Introduction

Web crawling has become an essential tool for businesses that want to tap into the vast amount of information available online. As Python's scraping ecosystem has matured through 2025, web scraping has changed considerably, with new techniques and powerful libraries that simplify data collection. Yet as the web crawling landscape grows more intricate, how can one navigate the ethical considerations and technical challenges that come into play? This article outlines four crucial steps for mastering web crawling with Python, giving you the knowledge to optimize your data-gathering strategies.

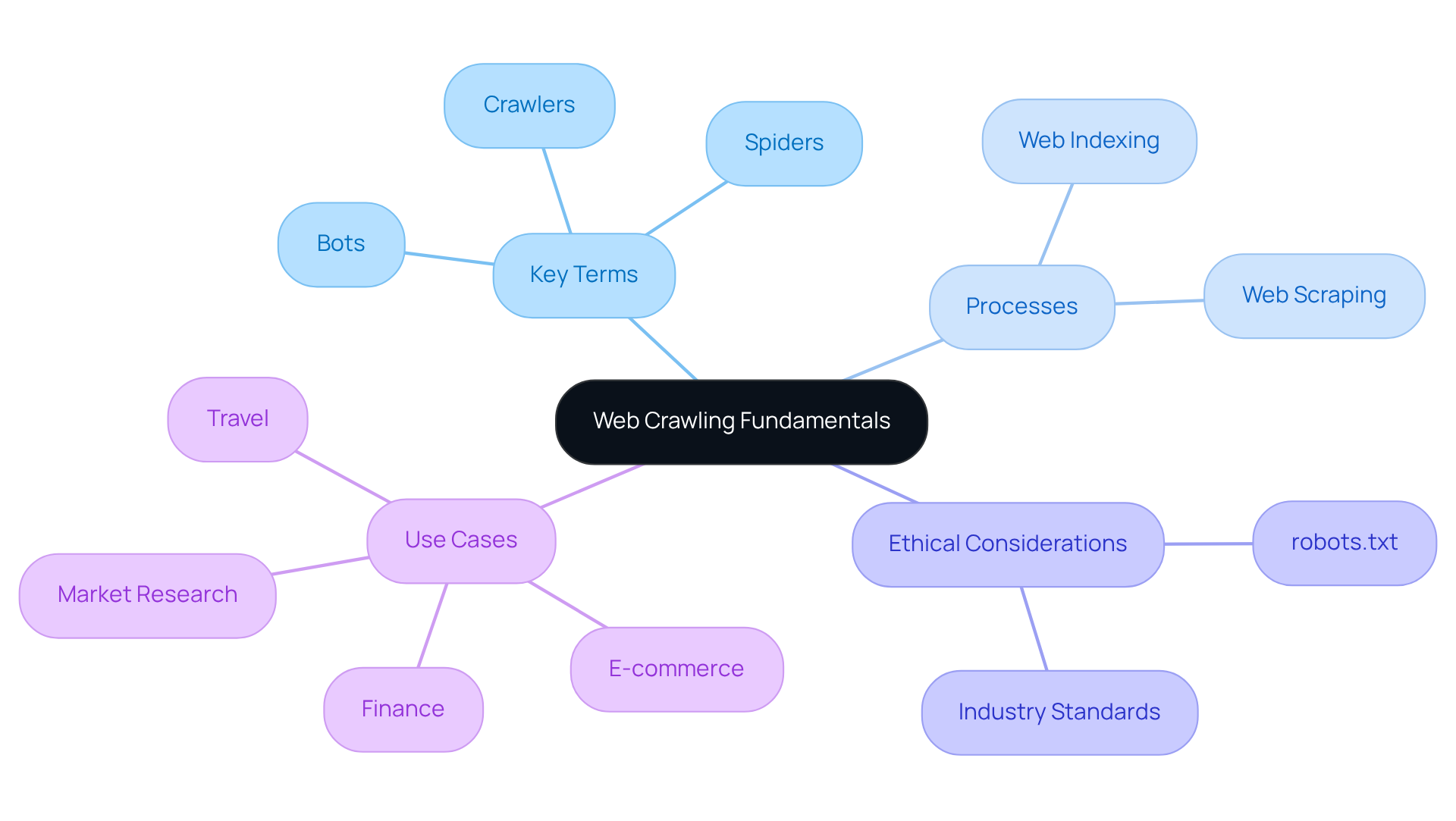

Understand Web Crawling Fundamentals

Web crawling is an automated method of systematically browsing the web to gather information from websites. With Python in 2025, it remains an efficient way to collect large amounts of data, helping businesses make informed decisions based on real-time insights. Websets leverages AI-driven market insights to enhance lead generation and market research, enabling sales teams to identify and connect with potential clients effectively through detailed company profiles and financial information.

Key terms in web crawling include:

- Bots: Automated programs that perform tasks on the internet, such as collecting data.

- Spiders: A type of bot specifically designed to index content from web pages.

- Crawlers: Programs that navigate the web to gather information, often used interchangeably with spiders.

It's crucial to differentiate between web indexing and web scraping. While indexing involves finding and cataloging web content, scraping focuses on retrieving specific information from web pages for analysis or application. Websets' platform supports both processes, offering comprehensive insights into startups and market trends.

Understanding the structure of web pages (HTML, CSS, and JavaScript) is vital for crawlers. These elements dictate how content is displayed and how crawlers can access and interpret it. Websets utilizes this knowledge to enrich data about companies, enhancing the quality of insights available to users.
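To make the link between page structure and crawling concrete, here is a minimal sketch using only the Python standard library. It reads the `<a href>` tags in each page's HTML to discover new URLs and visits them breadth-first. The `PAGES` dictionary is a hypothetical in-memory site standing in for real HTTP responses, and the names `LinkParser`, `extract_links`, and `crawl` are illustrative, not part of any library.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical in-memory "site" so the sketch runs without network access.
PAGES = {
    "https://example.com/": '<a href="/about">About</a> <a href="/products">Products</a>',
    "https://example.com/about": '<a href="/">Home</a>',
    "https://example.com/products": '<a href="/products/1">Item</a> <a href="/about">About</a>',
    "https://example.com/products/1": "<p>No links here</p>",
}


class LinkParser(HTMLParser):
    """Collects the href of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def extract_links(base_url, html):
    parser = LinkParser()
    parser.feed(html)
    # Resolve relative links against the page they were found on.
    return [urljoin(base_url, href) for href in parser.links]


def crawl(start_url, pages):
    """Breadth-first crawl: a queue of URLs to visit plus a set of seen URLs."""
    seen = {start_url}
    frontier = deque([start_url])
    order = []
    while frontier:
        url = frontier.popleft()
        order.append(url)
        for link in extract_links(url, pages.get(url, "")):
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return order


visited = crawl("https://example.com/", PAGES)
```

The `seen` set is what keeps the crawler from looping forever on pages that link back to each other. A real crawler would replace the dictionary lookup with an HTTP request.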

Respecting robots.txt files is a fundamental ethical consideration in web crawling. This file tells crawlers which parts of a website they may access, ensuring compliance with the site owner's preferences. The Internet Engineering Task Force has formalized the Robots Exclusion Protocol as RFC 9309, and its continuing standards work further underscores the importance of ethical practices in this area.
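As a rough illustration, Python's standard library includes `urllib.robotparser` for checking these rules before fetching a page. The robots.txt content and the `example-crawler` user-agent name below are assumptions made up for this sketch. A real crawler would load the file from the target site, for example with `set_url()` followed by `read()`.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the sketch runs offline.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""

USER_AGENT = "example-crawler"  # assumed name for this sketch


def build_parser(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# Ask whether a given URL may be fetched by our user agent.
allowed = parser.can_fetch(USER_AGENT, "https://example.com/products")
blocked = parser.can_fetch(USER_AGENT, "https://example.com/private/report")

# Crawl-delay is optional; crawl_delay() returns None when it is absent.
delay = parser.crawl_delay(USER_AGENT)
```

Here `allowed` is `True`, `blocked` is `False`, and `delay` is `2`, which a crawler could use as its minimum pause between requests.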

Common use cases for web crawling span various industries, including:

- E-commerce: Monitoring competitor pricing and product availability.

- Travel: Aggregating hotel and rental prices from platforms like Booking.com and Airbnb.

- Market Research: Gathering information for trend analysis and consumer insights, an area where Websets uses web crawling to provide deep insights into companies and industries.

- Finance: Collecting real-time information for investment analysis.

Statistics reveal that Googlebot's share of indexing traffic surged from 30% to 50% between May 2024 and May 2025, highlighting the increasing reliance on automated information-gathering techniques. Additionally, PerplexityBot experienced an astonishing 157,490% rise in requests, emphasizing the growing demand for real-time web information.

As industry specialists emphasize, understanding the nuances of bots, spiders, and crawlers is essential for refining crawling strategies and ensuring efficient data gathering, particularly when utilizing platforms like Websets.

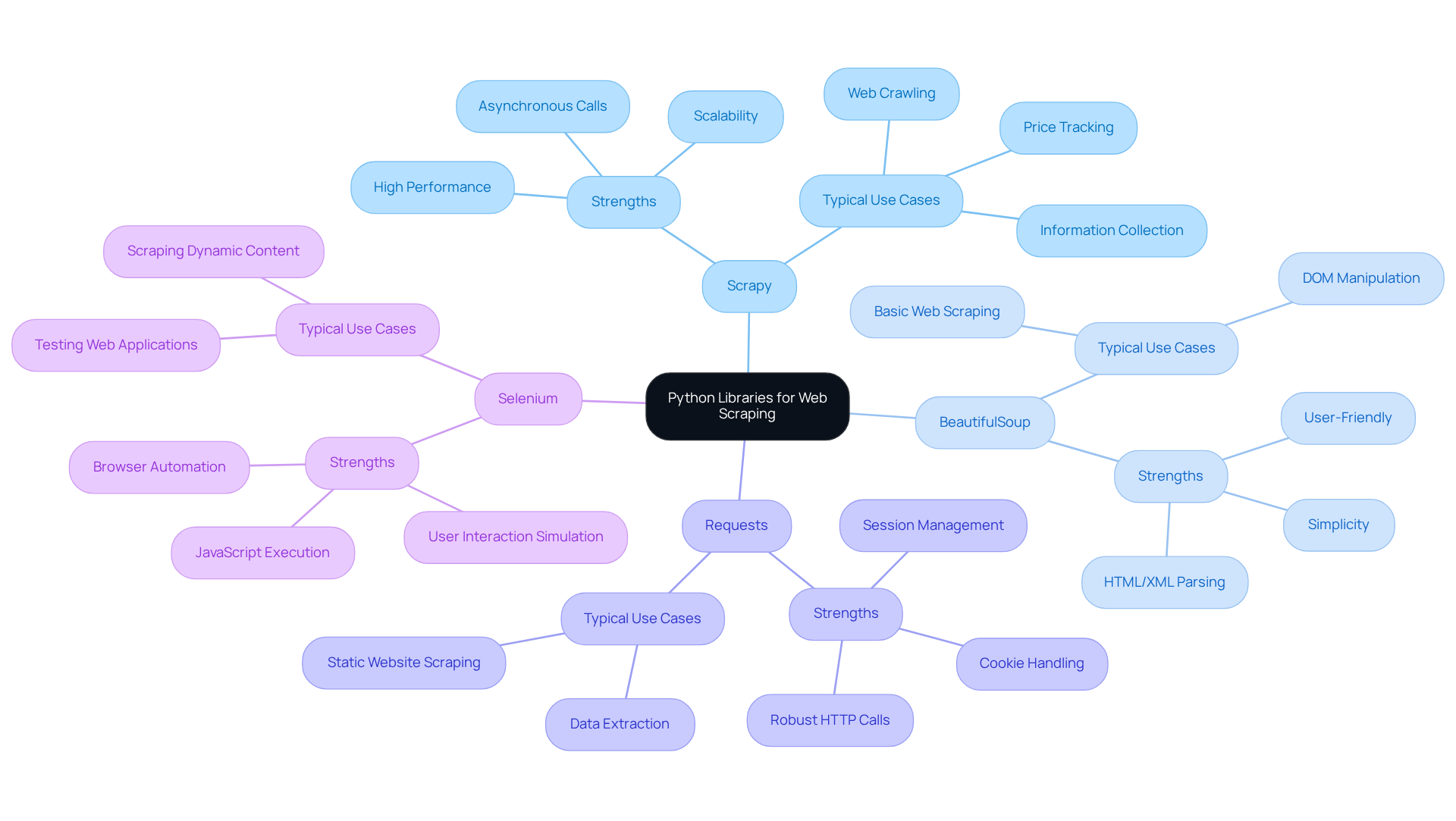

Select Appropriate Python Libraries and Tools

Researching popular Python libraries for web scraping reveals a range of powerful tools, including Scrapy, BeautifulSoup, Requests, and Selenium. Each library boasts unique strengths tailored to various project needs.

Scrapy stands out for its high performance and scalability, making it a go-to choice for large-scale crawling projects in Python. It issues requests asynchronously, which lets it retrieve structured information efficiently. For instance, Scrapy is often used to build crawlers for price tracking and data collection, where it can handle thousands of concurrent requests.

On the other hand, BeautifulSoup is celebrated for its simplicity and user-friendliness, making it ideal for beginners. It excels at parsing HTML and XML documents, allowing users to navigate and manipulate the DOM structure with ease. For straightforward web scraping tasks, combining BeautifulSoup with Requests is frequently recommended due to its intuitive API.

Requests is a robust library for making HTTP requests. It supports all HTTP methods and manages sessions and cookies. It pairs seamlessly with BeautifulSoup for data extraction, creating a smooth workflow for scraping static websites.
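A minimal sketch of that Requests plus BeautifulSoup workflow might look like the following. The markup in `SAMPLE_HTML` and the class names `product`, `title`, and `price` are assumptions invented for illustration, so real pages will need their own selectors. `fetch_html` shows the download step but is not called here, so the example runs without network access.

```python
import requests
from bs4 import BeautifulSoup

# Hypothetical page markup; the class names are assumptions for this sketch.
SAMPLE_HTML = """
<html><body>
  <div class="product"><h2 class="title">Widget</h2><span class="price">$9.99</span></div>
  <div class="product"><h2 class="title">Gadget</h2><span class="price">$19.50</span></div>
</body></html>
"""


def fetch_html(url, timeout=10):
    """Download a static page with Requests; raises on HTTP error statuses."""
    response = requests.get(url, timeout=timeout)
    response.raise_for_status()
    return response.text


def parse_products(html):
    """Extract title and price from each element with class 'product'."""
    soup = BeautifulSoup(html, "html.parser")
    products = []
    for card in soup.select("div.product"):
        title = card.select_one(".title").get_text(strip=True)
        price = card.select_one(".price").get_text(strip=True)
        products.append({"title": title, "price": float(price.lstrip("$"))})
    return products


products = parse_products(SAMPLE_HTML)
```

In practice you would pass the result of `fetch_html(url)` to `parse_products`. Keeping the two steps separate makes the parsing logic easy to test against saved HTML.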

Selenium, a versatile browser automation tool, excels in scraping dynamic content rendered by JavaScript. It enables interaction with web pages just like a human user, making it perfect for projects that require executing JavaScript and simulating user actions.

To install these essential libraries, use pip: `pip install scrapy beautifulsoup4 requests selenium`.

Setting up a virtual environment is highly recommended to manage dependencies effectively. This practice ensures your project remains organized and free from conflicts.

For those tackling advanced scraping tasks, consider exploring additional tools like Playwright. This tool offers robust browser automation capabilities and supports multiple browsers, further enhancing your web scraping toolkit.

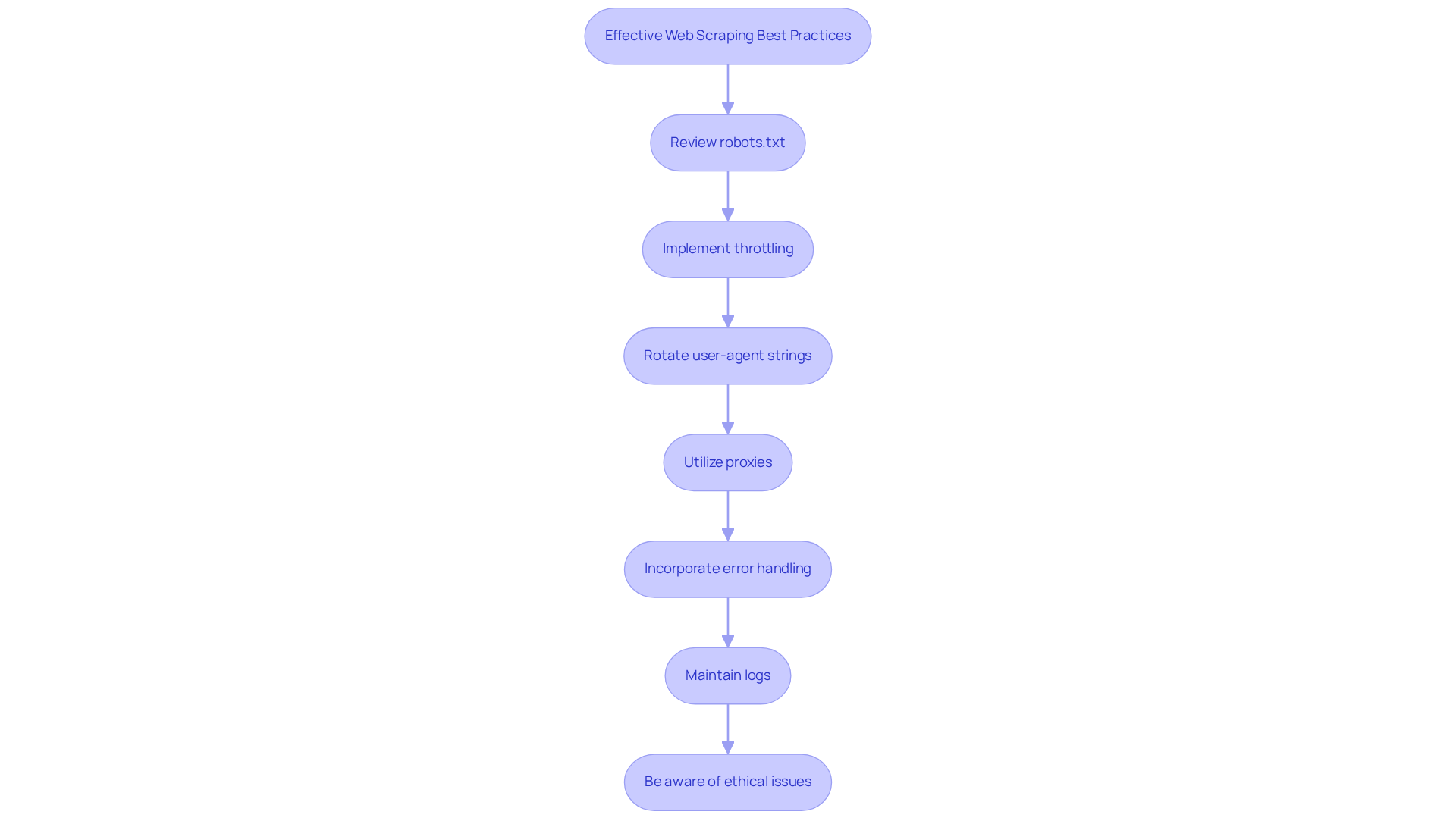

Follow Best Practices for Effective Web Scraping

- Always review the website's robots.txt file. This crucial document outlines the crawling permissions and restrictions, helping you manage crawler traffic effectively. Ignoring it can lead to serious consequences, such as IP blocking or server overload.

- Implement throttling to avoid overwhelming the server. This means pacing the requests your crawler sends, as opposed to relying on the server's own rate limiting to slow you down. For instance, calling `time.sleep()` between requests helps maintain a steady flow of traffic, reducing the risk of server overload and keeping the site responsive for its users.

- Rotate user-agent strings to mimic requests from various browsers. This tactic can help you avoid detection and potential blocking by the target site, ensuring smoother operations.

- Utilize proxies to distribute requests across multiple IP addresses. This strategy minimizes the risk of IP bans and facilitates more efficient scraping.

- Incorporate error handling by implementing retry logic for failed requests. This approach significantly improves the robustness of your scraping process, allowing for greater reliability.

- Maintain logs of your crawling activities. This practice is essential for effective troubleshooting and analysis, enabling you to optimize your scraping strategies.

- Be aware of the ethical and legal issues surrounding information scraping. Compliance with regulations is not just a best practice; it's essential for responsible operations.
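Several of the practices above (throttling, user-agent rotation, retries, and logging) can be combined in one small helper. The sketch below is one possible arrangement, not a definitive implementation. `polite_fetch`, the user-agent strings, and the injected `fetch` callable are all hypothetical names chosen for illustration, and proxies are left out. The `sleep` parameter is injectable so the demonstration runs instantly.

```python
import itertools
import logging
import time

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("crawler")

# Hypothetical user-agent strings; use values appropriate for your project.
USER_AGENTS = itertools.cycle([
    "example-crawler/1.0 (variant A)",
    "example-crawler/1.0 (variant B)",
])


def polite_fetch(url, fetch, delay=1.0, max_retries=3, backoff=2.0, sleep=time.sleep):
    """Call fetch(url, headers) with throttling, retries, and logging.

    `fetch` is any callable that returns the page body or raises an
    exception on failure; it is injected so the sketch stays library-agnostic.
    """
    wait = delay
    for attempt in range(1, max_retries + 1):
        headers = {"User-Agent": next(USER_AGENTS)}
        sleep(wait)  # throttle: pause before every request
        try:
            body = fetch(url, headers)
            logger.info("fetched %s on attempt %d", url, attempt)
            return body
        except Exception as exc:  # broad on purpose; narrow this in real code
            logger.warning("attempt %d for %s failed: %s", attempt, url, exc)
            wait *= backoff  # wait longer before the next retry
    raise RuntimeError(f"giving up on {url} after {max_retries} attempts")


# Demonstration with a fake fetcher that fails twice before succeeding.
calls = []


def flaky_fetch(url, headers):
    calls.append(headers["User-Agent"])
    if len(calls) < 3:
        raise ConnectionError("temporary failure")
    return "<html>ok</html>"


waits = []  # records the requested pauses instead of actually sleeping
result = polite_fetch("https://example.com/", flaky_fetch, delay=0.5, sleep=waits.append)
```

With Requests, the `fetch` argument could be a small function that calls `requests.get(url, headers=headers, timeout=10)`, runs `raise_for_status()`, and returns the response text. The exponential backoff (0.5, 1.0, then 2.0 seconds here) gives a struggling server progressively more room to recover.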

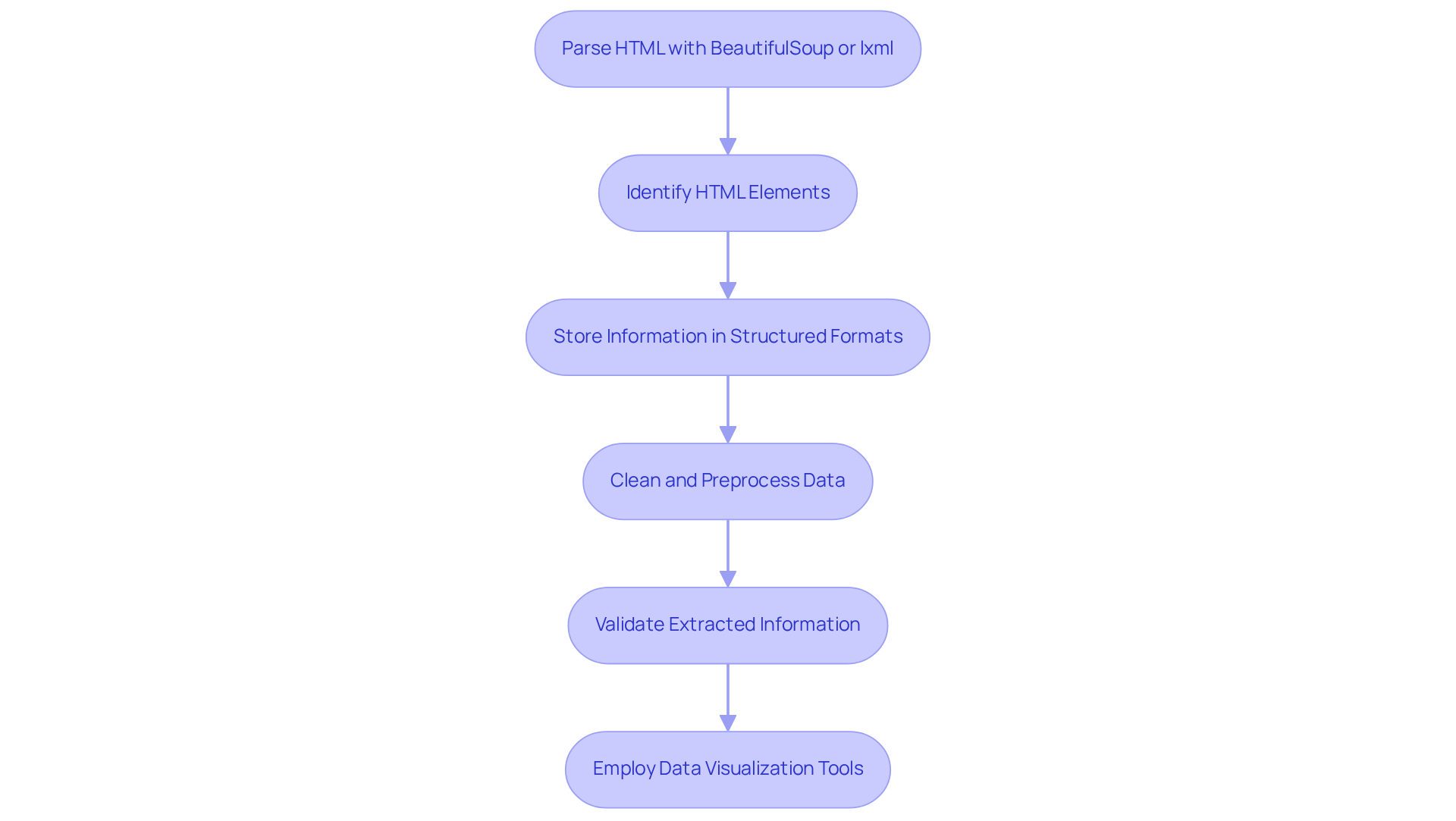

Implement Effective Data Extraction Techniques

- Leverage BeautifulSoup or lxml to effectively parse HTML content and extract the key data points.

- Identify the specific HTML elements - such as tags, classes, and IDs - that contain the desired information.

- Store the extracted information in structured formats like CSV, JSON, or databases to ensure easy access and usability.

- Clean and preprocess the information meticulously to eliminate duplicates and irrelevant content, enhancing data quality.

- Validate the extracted information rigorously to guarantee accuracy and completeness, which is essential for reliable analysis.

- Consider employing data visualization tools to analyze and present the extracted data effectively, making insights more accessible and actionable.
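The cleaning, validation, and storage steps above might be sketched as follows, using only the standard library. The records in `RAW_RECORDS` and the validation rules (a non-empty title and a non-negative numeric price) are assumptions for illustration, so adapt them to whatever fields your crawler actually extracts.

```python
import csv
import io
import json

# Hypothetical records, as they might come out of a parsing step.
RAW_RECORDS = [
    {"title": " Widget ", "price": "9.99"},
    {"title": "Widget", "price": "9.99"},   # duplicate after cleaning
    {"title": "Gadget", "price": "19.50"},
    {"title": "", "price": "4.00"},         # missing title
    {"title": "Gizmo", "price": "n/a"},     # unparseable price
]


def clean(record):
    """Normalize whitespace and convert the price to a float (None if invalid)."""
    title = record.get("title", "").strip()
    try:
        price = float(record.get("price", ""))
    except ValueError:
        price = None
    return {"title": title, "price": price}


def is_valid(record):
    """A record needs a non-empty title and a non-negative numeric price."""
    return bool(record["title"]) and record["price"] is not None and record["price"] >= 0


def prepare(records):
    """Clean, validate, and de-duplicate records while keeping their order."""
    seen = set()
    result = []
    for record in map(clean, records):
        key = (record["title"], record["price"])
        if is_valid(record) and key not in seen:
            seen.add(key)
            result.append(record)
    return result


def to_csv(records):
    """Serialize records to CSV text; write to a file object in real use."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["title", "price"])
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


rows = prepare(RAW_RECORDS)
csv_text = to_csv(rows)
json_text = json.dumps(rows, indent=2)
```

Only the Widget and Gadget records survive, since the others are duplicates or fail validation. For larger datasets, the same `rows` could be inserted into a database instead of being serialized to text.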

Conclusion

Web crawling with Python in 2025 is not just a trend; it’s a crucial strategy for data collection that empowers businesses to leverage the internet for informed decision-making. Understanding the fundamentals of web crawling, selecting the right tools, and adhering to ethical practices are essential for developers aiming to create efficient crawlers that navigate the vast digital landscape.

Key insights reveal the necessity of distinguishing between web indexing and scraping. Choosing appropriate Python libraries, such as Scrapy and BeautifulSoup, is vital. Moreover, respecting ethical guidelines, including robots.txt files, cannot be overlooked. Implementing strategies like throttling, user-agent rotation, and robust error handling ensures that web scraping is both successful and responsible.

As the demand for real-time information escalates, the ability to effectively crawl and extract data becomes increasingly vital across various industries. Embracing these techniques not only enhances data quality but also positions businesses to thrive in a competitive market. The journey into web crawling with Python transcends mere data collection; it’s about harnessing insights to drive innovation and success in an ever-evolving digital world. Are you ready to take the leap and transform your data strategy?

Frequently Asked Questions

What is web crawling with Python in 2025?

Web crawling with Python is an automated method of systematically browsing the web to gather information from various websites, allowing businesses to collect vast amounts of data efficiently for informed decision-making.

What are bots, spiders, and crawlers?

Bots are automated programs that perform tasks on the internet, such as collecting data. Spiders are a specific type of bot designed to index content from web pages, while crawlers are programs that navigate the web to gather information, often used interchangeably with spiders.

What is the difference between web indexing and web scraping?

Web indexing involves finding and cataloging web content, whereas web scraping focuses on retrieving specific information from web pages for analysis or application.

How does Websets utilize web crawling?

Websets leverages web crawling to provide AI-driven market insights that enhance lead generation and market research, allowing sales teams to identify and connect with potential clients through detailed company profiles and financial information.

Why is understanding web page structure important for crawlers?

Understanding the structure of web pages, which includes HTML, CSS, and JavaScript, is vital for crawlers as these elements dictate how content is displayed and how crawlers can access and interpret it.

What is the role of robots.txt files in web crawling?

Robots.txt files inform crawlers about which parts of a website can be accessed, ensuring compliance with the site owner's preferences and highlighting the importance of ethical practices in web crawling.

What are some common use cases for web crawling?

Common use cases for web crawling include monitoring competitor pricing in e-commerce, aggregating hotel and rental prices in travel, gathering information for market research, and collecting real-time data for investment analysis.

What recent statistics highlight the importance of web crawling?

Statistics show that Googlebot's share of indexing traffic increased from 30% to 50% between May 2024 and May 2025, and PerplexityBot experienced a 157,490% rise in requests, indicating a growing demand for real-time web information.

Why is it important to understand the nuances of bots, spiders, and crawlers?

Understanding the nuances of bots, spiders, and crawlers is essential for refining crawling strategies and ensuring efficient data gathering, especially when using platforms like Websets.