Introduction

Web crawlers are essential tools in today’s digital landscape, forming the backbone of data collection and analysis across diverse industries. As sales leaders strive to harness the power of these automated programs, grasping their core functionalities and the technologies that drive them is vital. But here’s the challenge: how can organizations design and implement a web crawler that not only meets their data needs but also adapts to the fast-paced online environment?

This guide offers a comprehensive, step-by-step approach to building a web crawler in 2025. It empowers sales teams to leverage data-driven insights for strategic success. Are you ready to transform your data strategy?

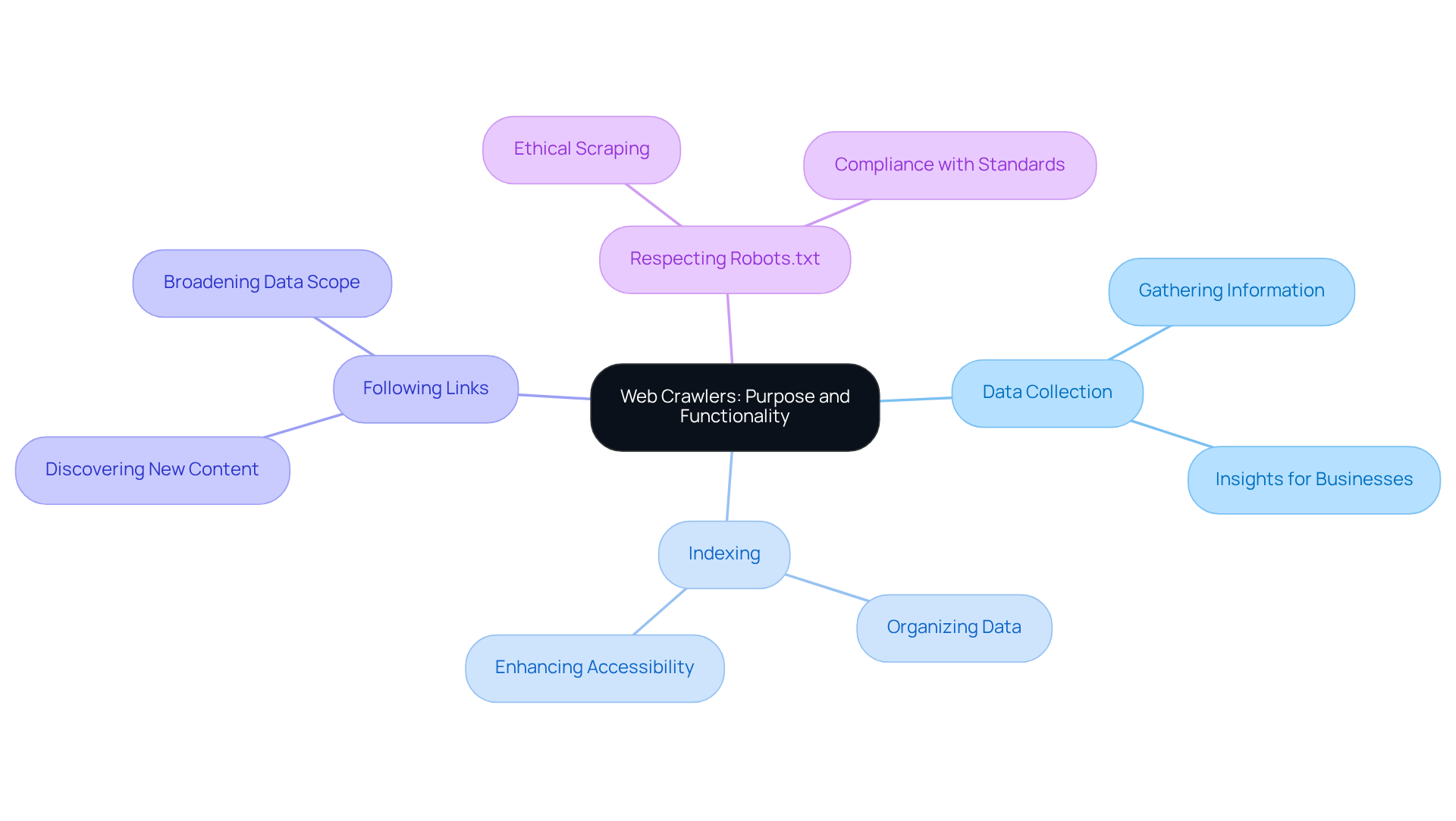

Understand Web Crawlers: Purpose and Functionality

Web crawlers, often referred to as spiders or bots, are automated programs that systematically scour the internet to collect information from web pages. Their primary role is to index content for search engines, but they also play a vital role in various applications, such as lead generation, market research, and competitive analysis.

Key Functions of Web Crawlers:

- Data Collection: Crawlers efficiently gather a wide range of information from websites, including text, images, and links. This data is crucial for businesses aiming to gain valuable insights.

- Indexing: The information collected is meticulously organized and stored, making it easily searchable and retrievable. This enhances accessibility for sales teams, allowing them to act swiftly.

- Following Links: By navigating through hyperlinks, bots can uncover new content, broadening the scope of data available for analysis.

- Respecting Robots.txt: Ethical web scrapers adhere to the guidelines set by websites in their robots.txt files, which dictate which pages can be accessed, ensuring compliance with web standards.

The impact of web spiders on market research is profound. In 2024, a staggering 73% of companies utilized web scraping for market insights and competitor tracking, highlighting its significance in data-driven decision-making. With the web scraping market projected to grow at a CAGR of 14.2%, reaching USD 2.00 billion by 2030, the relevance of these tools in sales strategies is undeniable.

Industry leaders emphasize the critical role of web spiders for sales teams. For example, 67% of U.S. investment advisers integrate web scraping into their alternative data programs, showcasing a strong reliance on real-time data harvesting to inform trading strategies. Furthermore, successful applications of web spiders in market research have been observed across various sectors, including e-commerce and finance, where they contribute to competitive intelligence and customer analytics.

Understanding these functionalities is essential for sales leaders looking to leverage web scrapers effectively. By doing so, they can enhance their lead generation strategies and improve their overall market research capabilities.

Gather Required Tools and Technologies

Creating a web extraction tool requires a strategic blend of programming languages, libraries, and tools. Here’s what you need to know:

Programming Languages:

- Python: This language is a favorite for web scraping, thanks to its simplicity and robust libraries.

- JavaScript: Essential for crawling dynamic websites that depend on JavaScript for rendering content.

Libraries and Frameworks:

- BeautifulSoup: A powerful Python library that simplifies parsing HTML and XML documents, making data extraction from web pages a breeze.

- Scrapy: An open-source Python framework for building web spiders, with built-in support for scheduling requests and processing responses.

- Selenium: This tool automates web browsers, making it perfect for scraping dynamic content.

Development Environment:

- IDE: Utilize an Integrated Development Environment like PyCharm or Visual Studio Code to streamline your coding process.

- Version Control: Implement Git for effective code version management and collaboration.

Additional Tools:

- Postman: Ideal for testing API requests, especially if your crawler interacts with web services.

- Docker: This tool helps create a consistent development environment.

Equipping yourself with these essential tools will not only simplify the development process but also enhance your bot's capabilities. Are you ready to take your web extraction skills to the next level?

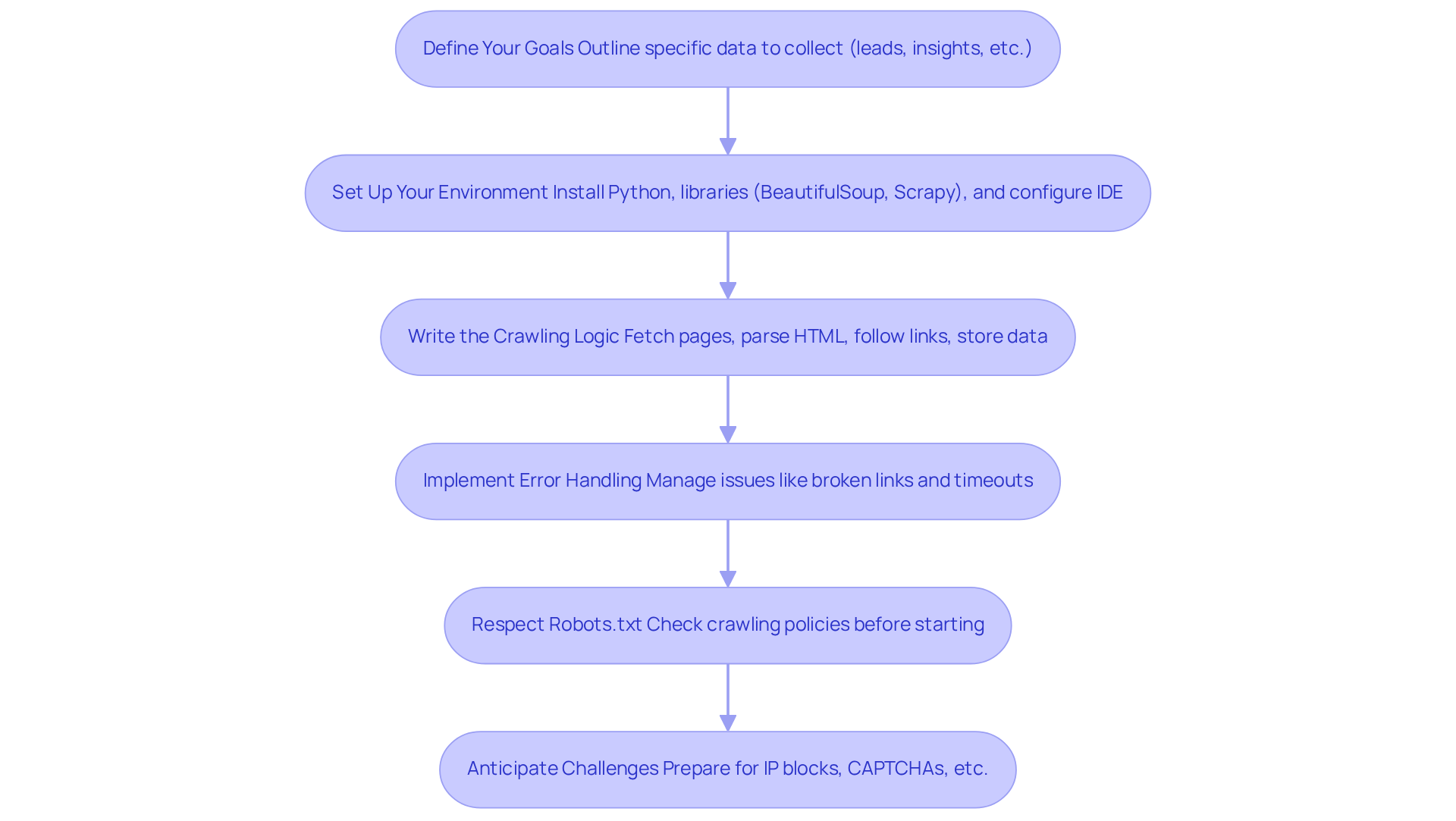

Design and Implement Your Web Crawler

Building a web crawler effectively in 2025 is crucial for successful lead generation and data collection, especially when leveraging Websets' AI-driven tools. Here’s how to do it:

Define Your Goals:

Clearly outline the specific data you aim to collect, whether it’s leads, market insights, or competitor information. Setting clear objectives directs your crawling approach and enhances the significance of the information collected, particularly when using Websets for deeper insights.

Set Up Your Environment:

Start by installing Python along with essential libraries like BeautifulSoup and Scrapy. Configure your integrated development environment (IDE) and implement a version control system to manage your code effectively. Integrating Websets' AI capabilities can streamline your information collection process.

Write the Crawling Logic:

- Fetch the Initial Page: Use the requests library to download the HTML content from your starting URL.

- Parse the HTML: Employ BeautifulSoup to extract relevant information and hyperlinks from the page.

- Follow Links: Develop logic to navigate through links to additional pages, repeating the fetching and parsing process as needed.

- Store Data: Organize the collected data in a structured format, such as CSV files or a database, for easy access and analysis. Websets can enhance this process by providing enriched profiles and insights on leads.
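The four sub-steps above can be sketched as one small breadth-first crawler. This is a minimal illustration, not a production design. To stay dependency-free it swaps in the standard library's `html.parser` and `urllib` for BeautifulSoup and requests. The names `LinkAndTitleParser`, `fetch_html`, `crawl`, and `rows_to_csv` are hypothetical, and the sketch assumes you only want pages on the starting host.

```python
import csv
import io
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse
from urllib.request import urlopen


class LinkAndTitleParser(HTMLParser):
    """Collects the <title> text and every <a href> value from one page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self.title = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)
        elif tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def fetch_html(url, timeout=10):
    """Default fetcher (assumption: pages are UTF-8 text)."""
    with urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


def crawl(start_url, fetch=fetch_html, max_pages=20):
    """Fetch, parse, and follow same-host links, breadth first."""
    host = urlparse(start_url).netloc
    queue = deque([start_url])
    seen = {start_url}
    rows = []
    while queue and len(rows) < max_pages:
        url = queue.popleft()
        parser = LinkAndTitleParser()
        parser.feed(fetch(url))
        rows.append({"url": url, "title": parser.title.strip(), "links": len(parser.links)})
        for href in parser.links:
            # Resolve relative links and drop "#fragment" suffixes.
            absolute, _fragment = urldefrag(urljoin(url, href))
            if urlparse(absolute).netloc == host and absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return rows


def rows_to_csv(rows):
    """Serialize the collected rows as CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["url", "title", "links"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

Passing the fetcher as a parameter keeps the crawl logic testable against canned HTML, and `max_pages` bounds the crawl so an early run cannot wander across a whole site.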

Implement Error Handling:

Equip your crawler with robust error handling capabilities to gracefully manage common issues like broken links, timeouts, or unexpected server responses. This ensures continuous operation and reliability.
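One way to handle these failures is a wrapper that retries transient errors with exponential backoff and reports permanent ones without raising. The sketch below is an assumption about how you might structure it. `fetch_with_retry` is a hypothetical helper, `fetch` is any callable that takes a URL and returns HTML, and the retry policy (three retries, doubling delay) is illustrative only.

```python
import time
from urllib.error import HTTPError, URLError


def fetch_with_retry(fetch, url, retries=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), retrying transient failures with exponential backoff.

    Returns (html, None) on success or (None, error_message) after giving up,
    so a single bad URL never stops the whole crawl.
    """
    last_error = None
    for attempt in range(retries + 1):
        try:
            return fetch(url), None
        except HTTPError as exc:
            # Most 4xx responses (e.g. a broken link) will not improve on retry;
            # 429 "Too Many Requests" and 5xx responses might.
            if 400 <= exc.code < 500 and exc.code != 429:
                return None, f"HTTP {exc.code}"
            last_error = f"HTTP {exc.code}"
        except (URLError, TimeoutError, ConnectionError) as exc:
            last_error = f"{type(exc).__name__}: {exc}"
        if attempt < retries:
            # Wait base_delay, then 2x, then 4x, ... before the next attempt.
            sleep(base_delay * 2 ** attempt)
    return None, last_error
```

Returning an error message instead of raising lets the calling loop log the failure and move on to the next URL.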

Respect Robots.txt:

Before initiating your crawl, review the target site's robots.txt file to confirm compliance with its crawling policies. Adhering to these guidelines not only prevents potential blocks but also fosters ethical data collection practices.
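Python's standard library includes `urllib.robotparser` for this check. The sketch below parses a robots.txt body that is already in memory so it runs without network access; against a live site you would instead call `set_url(...)` and `read()` on the parser. The user agent string and the helper names `load_rules` and `allowed_urls` are hypothetical.

```python
from urllib.robotparser import RobotFileParser

USER_AGENT = "example-sales-crawler"  # hypothetical crawler name


def load_rules(robots_txt):
    """Build a parser from the text of a robots.txt file."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def allowed_urls(parser, urls, user_agent=USER_AGENT):
    """Keep only the URLs this user agent is permitted to fetch."""
    return [url for url in urls if parser.can_fetch(user_agent, url)]
```

A short usage example, with made-up rules:

```python
rules = load_rules("User-agent: *\nDisallow: /private/\nCrawl-delay: 2\n")
candidates = ["https://example.com/pricing", "https://example.com/private/report"]
print(allowed_urls(rules, candidates))
print(rules.crawl_delay(USER_AGENT))  # seconds the site asks crawlers to wait, if declared
```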

Anticipate Challenges:

Be prepared for common challenges during web crawling, such as IP blocks and CAPTCHAs. Implement strategies like using residential proxies and retry logic to mitigate these issues and maintain smooth operation.

By following these steps, you’ll build a web crawler in 2025 that is tailored to your prospect generation needs. In 2025, the success rates of online bots in generating prospects are expected to rise significantly, with effective implementations yielding higher-quality opportunities and improved market insights. As sales leaders emphasize, organized information gathering is vital for informed decision-making and strategic planning. As Dave Elkington states, "You have to generate revenue as efficiently as possible. And to do that, you must create a data-driven sales culture. Data trumps intuition."

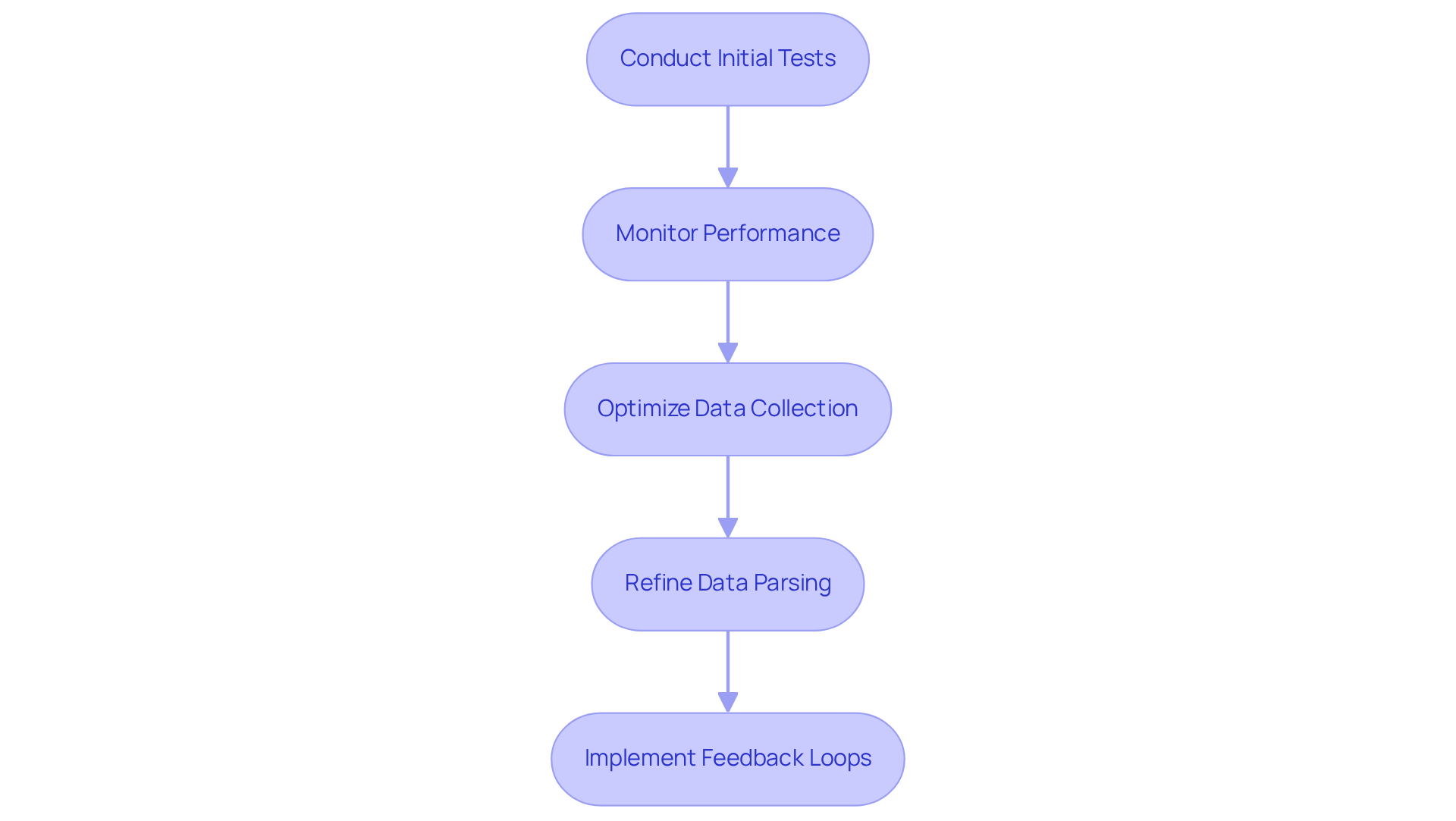

Test and Optimize Your Web Crawler

Once your web extractor is built, boosting its performance is crucial for effective information collection and lead generation. Here’s a streamlined approach to ensure your crawler operates at its peak:

Conduct Initial Tests

Start by running your crawler on a limited set of URLs to confirm it collects data accurately. This initial testing phase is vital for spotting errors or unexpected behaviors early on.
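A small-sample test is easier to judge with an automated sanity check on the output. The sketch below assumes each crawled record is a dictionary with `url` and `title` keys. Both that schema and the `validate_records` helper are hypothetical, so adapt the required fields to whatever your crawler stores.

```python
def validate_records(records, required_fields=("url", "title")):
    """Return (record_index, problem) pairs for records that look wrong."""
    problems = []
    seen_urls = set()
    for index, record in enumerate(records):
        # Flag fields that are absent, None, or only whitespace.
        for name in required_fields:
            if not str(record.get(name) or "").strip():
                problems.append((index, f"missing {name}"))
        # Flag pages that were stored more than once.
        url = record.get("url")
        if url in seen_urls:
            problems.append((index, "duplicate url"))
        elif url:
            seen_urls.add(url)
    return problems
```

An empty result on a handful of known pages is a reasonable signal that the crawler is ready for a larger run.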

Monitor Performance

Keep a close eye on the speed and efficiency of your crawler. Use logging mechanisms to capture errors and performance metrics, which will help you evaluate its operational effectiveness. Companies leveraging Websets' AI-driven platform have reported significant improvements in their prospect generation capabilities through diligent monitoring and optimization strategies.
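As a rough illustration of such logging, the sketch below times each fetch, logs the outcome with the standard `logging` module, and keeps running totals. `CrawlStats` and `timed_fetch` are hypothetical names, and the metrics chosen (error rate, average fetch time) are just one reasonable starting set.

```python
import logging
import time
from dataclasses import dataclass, field

logger = logging.getLogger("crawler")


@dataclass
class CrawlStats:
    """Running totals for one crawl."""
    pages: int = 0
    errors: int = 0
    total_seconds: float = 0.0
    failed_urls: list = field(default_factory=list)

    def record(self, url, seconds, error=None):
        self.pages += 1
        self.total_seconds += seconds
        if error is None:
            logger.info("fetched %s in %.2fs", url, seconds)
        else:
            self.errors += 1
            self.failed_urls.append(url)
            logger.warning("failed %s after %.2fs: %s", url, seconds, error)

    @property
    def error_rate(self):
        return self.errors / self.pages if self.pages else 0.0

    @property
    def average_seconds(self):
        return self.total_seconds / self.pages if self.pages else 0.0


def timed_fetch(fetch, url, stats, clock=time.perf_counter):
    """Run fetch(url), recording duration and any exception in stats."""
    start = clock()
    try:
        html = fetch(url)
    except Exception as exc:
        stats.record(url, clock() - start, error=exc)
        return None
    stats.record(url, clock() - start)
    return html
```

Call `logging.basicConfig(level=logging.INFO)` once at startup to see the log lines, then inspect `stats.error_rate` and `stats.average_seconds` after each run.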

Optimize Data Collection

Tailor the crawling frequency and depth to fit the website's structure and your specific data needs. Implement rate limiting to avoid overloading the target server, which can run up costly serverless bills and degrade performance. Websets' platform provides features like intelligent scheduling and adaptive crawling strategies that enhance efficiency. Statistics indicate that well-optimized crawlers can retrieve 100-200 pages per second, underscoring the importance of efficiency in information collection. Websets' tools can streamline this process by delivering detailed information about prospects, ensuring your efforts yield high-quality outcomes.
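Rate limiting can be as simple as enforcing a minimum gap between requests to the same host. The sketch below is one possible approach under that assumption. `HostRateLimiter` is a hypothetical class, and the interval should come from the site's own guidance (such as a Crawl-delay directive) where one exists.

```python
import time
from urllib.parse import urlparse


class HostRateLimiter:
    """Enforces a minimum delay between requests to the same host."""

    def __init__(self, min_interval=1.0, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last_request = {}  # host -> time of the most recent request

    def wait(self, url):
        """Sleep if needed before requesting url; return the seconds waited."""
        host = urlparse(url).netloc
        now = self._clock()
        last = self._last_request.get(host)
        delay = 0.0
        if last is not None:
            delay = max(0.0, self.min_interval - (now - last))
        if delay > 0:
            self._sleep(delay)
        self._last_request[host] = now + delay
        return delay
```

Calling `limiter.wait(url)` immediately before each fetch keeps every host's request rate at or below one request per `min_interval` seconds, while requests to different hosts are not delayed by each other.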

Refine Data Parsing

Regularly review and enhance your data extraction logic to ensure accuracy. Adjust parsing rules to capture all relevant information, which is essential for generating high-quality leads. Utilizing Websets' AI capabilities can simplify this process, enabling more precise information extraction tailored to your needs.

Implement Feedback Loops

Leverage the data collected to refine your crawling strategy. Analyze results to pinpoint areas for improvement, ensuring your system evolves to meet changing data requirements. By incorporating insights from Websets' case studies, you can adopt best practices that have proven effective in enhancing prospect generation and recruitment efforts.

By consistently testing and optimizing your web spider, you can significantly boost its effectiveness, ultimately driving better results in your prospect generation efforts. Organizations that prioritize optimization for search engine bots have seen remarkable enhancements in their lead generation capabilities, as evidenced by case studies showcasing effective applications of rate limiting and caching techniques. Moreover, optimizing crawlers contributes to sustainability by reducing unnecessary bot traffic, aligning with current trends in web crawling.

Conclusion

Building a web crawler in 2025 offers sales leaders a powerful opportunity to leverage data-driven insights. Understanding the purpose and functionality of web crawlers enables businesses to gather and analyze information that drives strategic decision-making. This guide has illuminated the path from conceptualization to implementation, ensuring that sales teams can effectively use these tools to enhance lead generation and market research.

Key insights discussed throughout this article highlight the essential functions of web crawlers (data collection, indexing, and link following) alongside the critical tools and technologies necessary for successful implementation. The outlined steps for designing, implementing, and optimizing a web crawler provide a comprehensive framework that empowers sales professionals to navigate the complexities of data extraction while adhering to ethical standards such as respecting each site's robots.txt rules.

In a rapidly evolving digital landscape, effectively utilizing web crawlers is not just an advantage; it’s a necessity for any sales-driven organization. By adopting these best practices and continually refining their strategies, sales leaders can ensure their teams remain competitive and informed. Embracing the potential of web scraping tools will not only enhance prospect generation but also foster a culture of data-driven decision-making that propels businesses toward success.

Frequently Asked Questions

What are web crawlers and what is their primary purpose?

Web crawlers, also known as spiders or bots, are automated programs that systematically browse the internet to collect information from web pages. Their primary purpose is to index content for search engines.

What are the key functions of web crawlers?

The key functions of web crawlers include data collection, indexing, following links, and respecting robots.txt guidelines. They gather information from websites, organize it for easy retrieval, navigate through hyperlinks to discover new content, and adhere to ethical guidelines regarding access to web pages.

How do web crawlers contribute to market research?

Web crawlers significantly impact market research by providing valuable insights and competitor tracking. In 2024, 73% of companies used web scraping for these purposes, highlighting its importance in data-driven decision-making.

What is the projected growth of the web scraping market?

The web scraping market is projected to grow at a compound annual growth rate (CAGR) of 14.2%, reaching USD 2.00 billion by 2030.

How are web crawlers utilized by sales teams?

Sales teams rely on web crawlers to enhance lead generation strategies and improve market research capabilities. For example, 67% of U.S. investment advisers use web scraping in their alternative data programs to inform trading strategies.

In which industries are web crawlers particularly effective?

Web crawlers are effective across various sectors, including e-commerce and finance, where they contribute to competitive intelligence and customer analytics.