Introduction

Mastering web scraping is no longer optional for developers aiming to extract valuable data from the vast expanse of the internet. At the core of this essential process is cURL, a powerful command-line tool that, when paired with Python, unlocks a realm of possibilities for efficient data gathering. This article explores the intricacies of using cURL with Python, providing a step-by-step guide that simplifies setup and addresses common challenges in web scraping. How can developers harness this potent combination to elevate their data extraction strategies while navigating the complexities of today’s web environments?

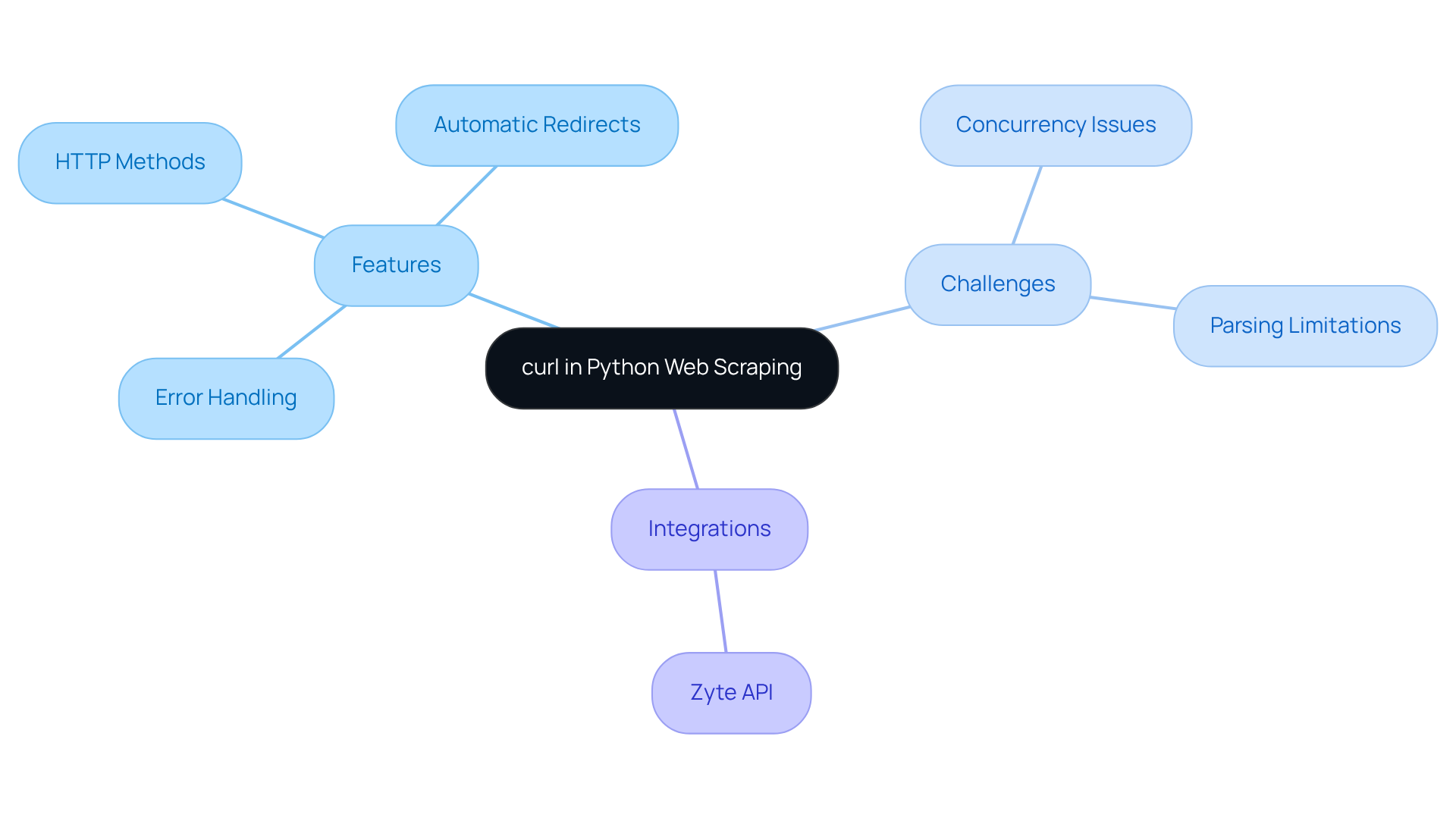

Understand curl and Its Role in Python Web Scraping

cURL (short for "Client URL") is a command-line tool for transferring data to and from servers over a range of protocols, including HTTP and HTTPS. For web scraping in Python, it handles the request side of the job: it sends GET and POST requests, manages cookies, and lets you customize headers, which covers most of what is needed to retrieve web content.

From Python, the tool can be used through a binding such as PycURL or by invoking the curl executable through the subprocess module. Either route lets developers interact with web pages programmatically, gather data, and automate repetitive tasks. This foundational knowledge sets the stage for the more advanced extraction techniques in subsequent sections.
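As a rough illustration of the subprocess route, the sketch below builds a curl command line and runs it. The function names `build_curl_command` and `fetch_with_curl`, and the default retry count and time limit, are assumptions made for this example. It also assumes the curl executable is available on your PATH.

```python
import subprocess


def build_curl_command(url, headers=None, retries=3, max_time=30):
    """Return the argv list for a curl call that prints the body to stdout."""
    command = [
        "curl",
        "--silent",       # no progress meter
        "--show-error",   # still report errors on stderr
        "--fail",         # exit non-zero on HTTP 4xx/5xx responses
        "--location",     # follow redirects
        "--retry", str(retries),      # retry transient failures
        "--max-time", str(max_time),  # time limit for a single transfer, in seconds
    ]
    for name, value in (headers or {}).items():
        command += ["--header", f"{name}: {value}"]
    command.append(url)
    return command


def fetch_with_curl(url, **options):
    """Run curl and return the response body as text."""
    result = subprocess.run(
        build_curl_command(url, **options),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError if curl exits non-zero
    )
    return result.stdout
```

Calling `fetch_with_curl("http://example.com", headers={"User-Agent": "my-scraper"})` would return the page body as a string. Passing the command as a list, rather than as a single shell string, avoids shell quoting problems when URLs contain characters such as `&`.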

Recent advancements have enhanced the tool's functionalities, solidifying its status as a preferred choice among developers. As of 2026, approximately 14% of developers utilize a command-line tool for web extraction, underscoring its growing importance in the field. Key features include:

- Robust error handling

- Support for various HTTP methods

- Automatic redirect following

Together, these features streamline the data extraction process. The command-line tool can also retry requests after temporary errors when given the --retry flag, which makes data collection more reliable.

However, it's important to recognize that the tool faces challenges with large-scale web extraction tasks due to its lack of built-in concurrency and throttling features. Furthermore, it does not natively support parsing and extracting structured information from HTML, XML, or JSON, which limits its effectiveness in more complex extraction scenarios. Experts assert that effectively utilizing the command-line tool can significantly enhance data collection strategies. Integrating it with the Zyte API can also help mitigate issues related to anti-bot systems and IP bans. As the web scraping landscape evolves, understanding the tool's capabilities will be vital for anyone aiming to excel in this domain.
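Because the tool itself offers neither concurrency nor throttling, both are usually added on the Python side. The following is a minimal sketch using only the standard library. `Throttle` and `fetch_all` are hypothetical names, and `fetch` stands for whatever single-URL download function you already have (PycURL-based or otherwise).

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class Throttle:
    """Let callers of wait() proceed at most once per `interval` seconds."""

    def __init__(self, interval):
        self.interval = interval
        self._lock = threading.Lock()
        self._next_slot = 0.0

    def wait(self):
        with self._lock:
            now = time.monotonic()
            slot = max(now, self._next_slot)  # earliest free start time
            self._next_slot = slot + self.interval
        time.sleep(slot - now)  # sleep outside the lock


def fetch_all(urls, fetch, max_workers=4, interval=0.5):
    """Fetch URLs concurrently, spacing request starts by `interval` seconds."""
    urls = list(urls)
    throttle = Throttle(interval)

    def task(url):
        throttle.wait()
        return fetch(url)

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() yields results in the same order as the input URLs
        return dict(zip(urls, pool.map(task, urls)))
```

Threads suit this workload because each worker spends most of its time waiting on the network. The throttle limits how often requests start, independently of how many workers are running.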

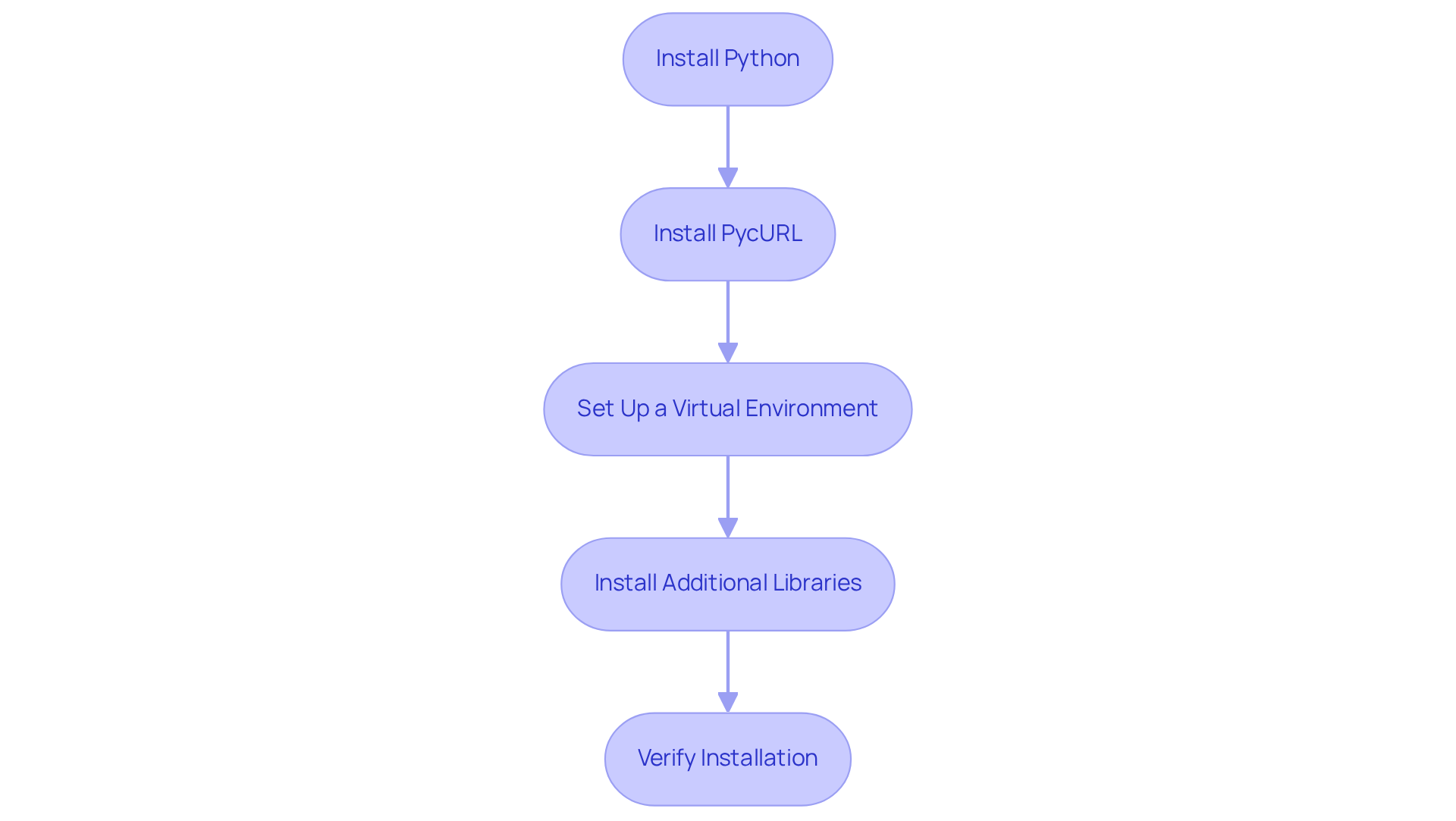

Set Up Your Environment for curl in Python

To set up your environment for using cURL in Python, follow these essential steps:

- Install Python: Make sure Python is installed on your system. You can download it from the official Python website.

- Install PycURL: Next, install PycURL, a Python interface to the libcurl library. Run the following command in your terminal or command prompt:

      pip install pycurl

  If you run into any issues, double-check that the cURL library is also installed on your system. PycURL is favored for its efficiency and control over HTTP requests, making it a good fit for developers tackling complex data extraction tasks.

- Set Up a Virtual Environment (Optional): Creating a virtual environment for your projects is a best practice that can save you headaches down the line. Execute this command:

      python -m venv myenv

  To activate the virtual environment, use:

  - On Windows:

        myenv\Scripts\activate

  - On macOS/Linux:

        source myenv/bin/activate

- Install Additional Libraries: Depending on your data extraction needs, consider installing additional libraries such as Requests for HTTP requests or BeautifulSoup for parsing HTML. Use pip to install them with:

      pip install requests beautifulsoup4

- Verify Installation: Finally, to ensure everything is set up correctly, run a simple Python script to check that PycURL can be imported:

      import pycurl
      print('PycURL is installed and ready to use!')
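If the import check fails, or you want to confirm which libcurl build PycURL is linked against, a slightly more informative check might look like the sketch below. The function name `pycurl_status` is an assumption made for this example. `pycurl.version` is the version string that PycURL exposes.

```python
def pycurl_status():
    """Describe the PycURL installation, or explain that it is missing."""
    try:
        import pycurl
    except ImportError as exc:
        return f"PycURL is not importable: {exc}"
    # pycurl.version names both PycURL and the libcurl build it is linked against
    return f"PycURL is ready: {pycurl.version}"


print(pycurl_status())
```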

By following these steps, you will establish a fully functional environment ready for web scraping with cURL in Python. This configuration aligns with current trends in Python library utilization, as the web data collection market is projected to reach USD 2.00 billion by 2030. This underscores the growing significance of data extraction across various sectors. Additionally, addressing common setup issues can significantly enhance your experience and efficiency in web extraction.

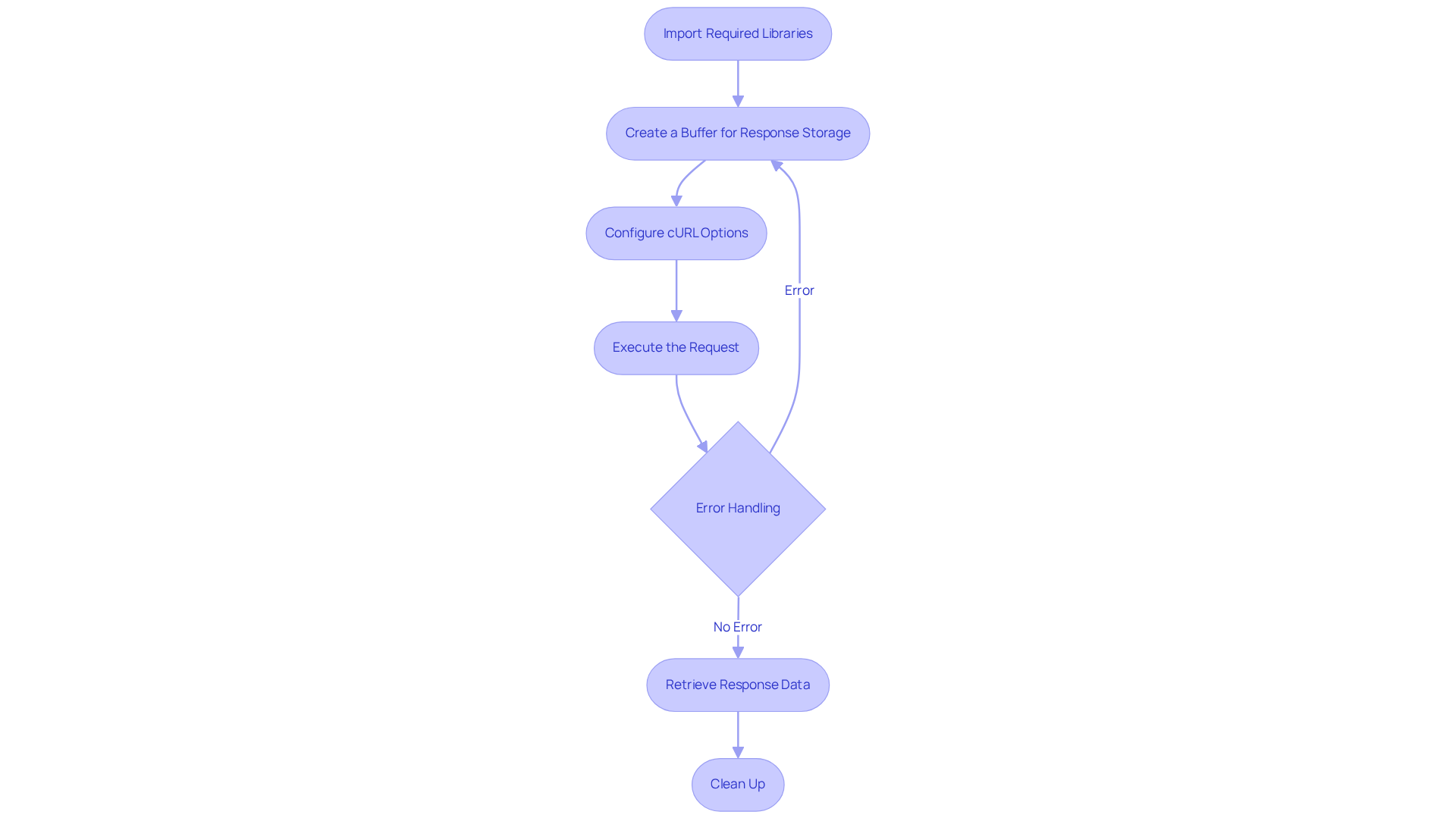

Implement Web Scraping Logic with curl in Python

To implement web scraping logic using cURL in Python, follow these essential steps:

- Import Required Libraries: Start by importing the necessary libraries in your Python script:

      import pycurl
      from io import BytesIO

- Create a Buffer for Response Storage: Use a buffer to capture the server's response:

      buffer = BytesIO()

- Configure cURL Options: Initialize a cURL object and set the options for your request:

      curl = pycurl.Curl()
      curl.setopt(curl.URL, 'http://example.com')  # Replace with your target URL
      curl.setopt(curl.WRITEDATA, buffer)  # Write the response body into the buffer
      curl.setopt(curl.FOLLOWLOCATION, True)  # Follow redirects

- Execute the Request: Perform the request and capture the response:

      curl.perform()

- Error Handling: perform() raises pycurl.error when a transfer fails, so wrap the call from the previous step in a try/except block instead of checking for errors afterwards:

      try:
          curl.perform()
      except pycurl.error as exc:
          print('Error:', exc)

- Retrieve Response Data: Extract the response data from the buffer:

      response_data = buffer.getvalue().decode('utf-8')
      print(response_data)  # Print or process the data as needed

- Clean Up: Finally, close the cURL object to free resources:

      curl.close()
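Put together, the steps above might be wrapped in a single reusable function along the following lines. This is a sketch, not a definitive implementation: `FetchResult` and `fetch` are names invented for this example, the timeout values are arbitrary, and the HTTP status check is an addition to the steps listed above.

```python
from dataclasses import dataclass
from io import BytesIO


@dataclass
class FetchResult:
    status: int  # HTTP status code reported by the server
    text: str    # decoded response body

    @property
    def ok(self):
        """True for 2xx responses."""
        return 200 <= self.status < 300


def fetch(url, timeout=30):
    """Download `url` with PycURL and return a FetchResult."""
    import pycurl  # imported here so FetchResult stays usable without PycURL

    buffer = BytesIO()
    curl = pycurl.Curl()
    try:
        curl.setopt(curl.URL, url)
        curl.setopt(curl.WRITEDATA, buffer)
        curl.setopt(curl.FOLLOWLOCATION, True)  # follow redirects
        curl.setopt(curl.CONNECTTIMEOUT, 10)    # seconds allowed for connecting
        curl.setopt(curl.TIMEOUT, timeout)      # seconds allowed for the whole transfer
        curl.perform()  # raises pycurl.error on network-level failures
        status = curl.getinfo(curl.RESPONSE_CODE)
    finally:
        curl.close()  # always release the handle
    # errors="replace" avoids a crash when the page is not valid UTF-8
    return FetchResult(status, buffer.getvalue().decode("utf-8", errors="replace"))
```

A call such as `result = fetch("http://example.com")` returns both the status and the body, so the caller can check `result.ok` before parsing. An HTTP error such as 404 does not raise an exception here; only transport-level failures do.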

This foundational implementation enables you to scrape data from a specified URL effectively. You can enhance this logic by adding custom headers, managing cookies, or parsing the response data with libraries like BeautifulSoup. As you embark on your data extraction projects, remember to adhere to best practices. Respect robots.txt files and implement rate limiting to avoid overwhelming servers.
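To illustrate the parsing step, the sketch below extracts links from a downloaded page. It uses the standard library's `html.parser` in place of BeautifulSoup so that it runs without extra dependencies. BeautifulSoup would be the more convenient choice for anything beyond simple cases. `LinkExtractor` and `extract_links` are names chosen for this example.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collect the absolute URL of every <a href="..."> in a document."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            # Resolve relative links against the page's own URL
            self.links.append(urljoin(self.base_url, href))


def extract_links(html, base_url):
    """Return all link targets found in `html`, in document order."""
    parser = LinkExtractor(base_url)
    parser.feed(html)
    parser.close()
    return parser.links
```

The `html` argument would typically be the decoded response body from the scraping steps above, and `base_url` the address it was fetched from.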

Web data extraction is significant today, with 10.2% of all global web traffic now coming from extractors. This statistic underscores the importance of understanding and implementing effective data extraction techniques responsibly. The industry is evolving towards a model of data partnership rather than mere extraction, emphasizing the need for ethical practices in data collection.
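One concrete way to respect a site's wishes is to consult its robots.txt before requesting a page. The sketch below uses Python's standard `urllib.robotparser` and assumes you have already downloaded the robots.txt text, for example with the same cURL logic used for pages. The function name `is_allowed` and the user agent `my-scraper` are placeholders.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt, user_agent, url):
    """Return True if `robots_txt` (the file's text) lets `user_agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


rules = "User-agent: *\nDisallow: /private/\n"
print(is_allowed(rules, "my-scraper", "http://example.com/index.html"))
print(is_allowed(rules, "my-scraper", "http://example.com/private/data.html"))
```

With the sample rules shown, the first URL is permitted and the second is not. Note that robots.txt expresses the site's crawling preferences; a site's terms of service may impose further limits.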

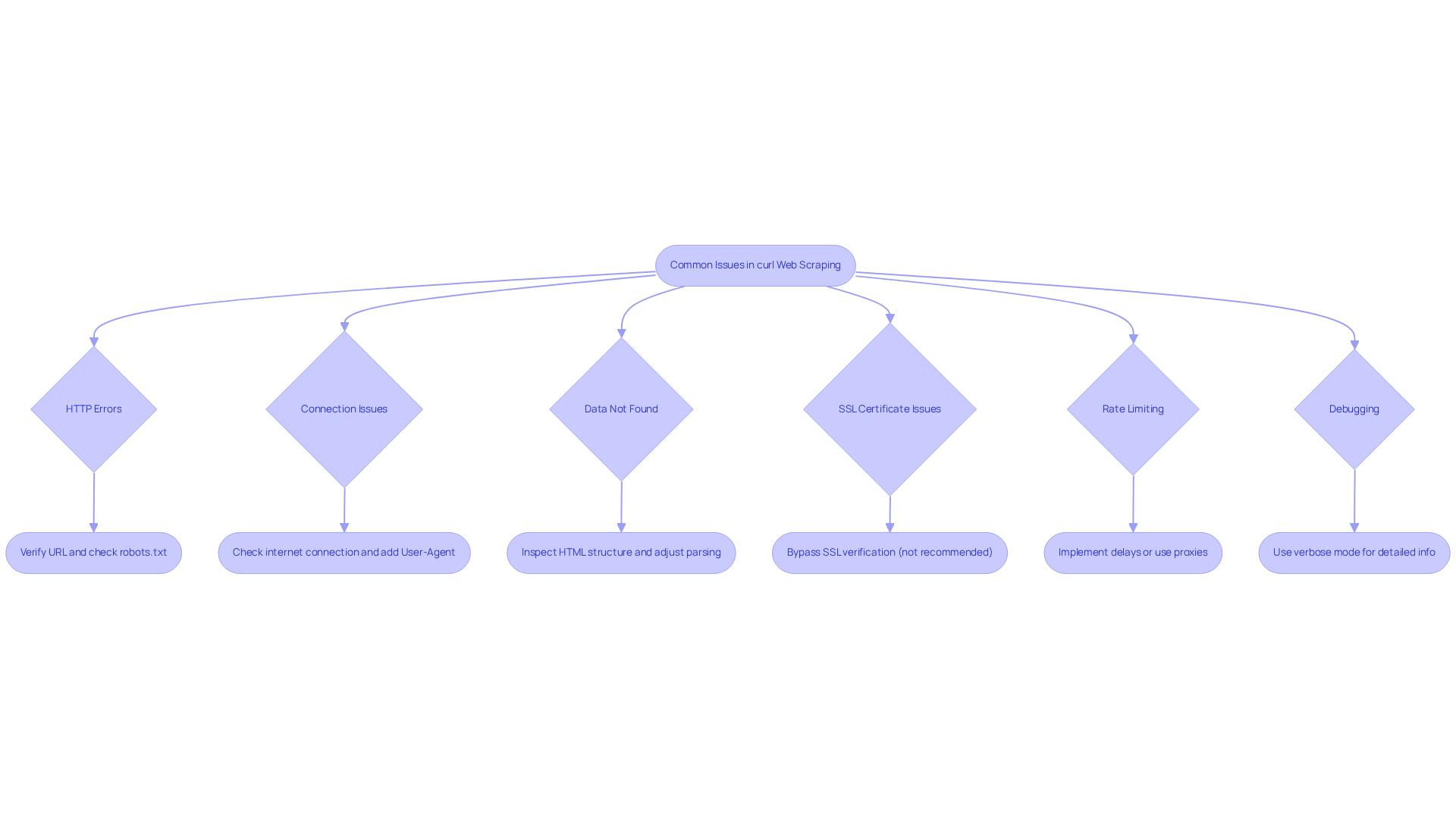

Troubleshoot Common Issues in curl Web Scraping

Using curl with Python for web scraping can be straightforward, but common issues may arise. Here are essential troubleshooting tips to resolve them effectively:

- HTTP Errors: Encountering HTTP errors like 404 or 403? Verify the URL for accuracy and ensure the target website allows data extraction. Check the site's robots.txt file to understand its scraping policies.

- Connection Issues: Experiencing connection timeouts or failures? First, verify your internet connection and check if the target server is reachable. Some servers also reject requests that lack a browser-like User-Agent, so consider adding one:

      curl.setopt(curl.HTTPHEADER, ['User-Agent: Mozilla/5.0'])

- Data Not Found: If the expected data is missing from the response, ensure the website structure hasn’t changed. Use browser developer tools to inspect the HTML structure and adjust your parsing logic accordingly.

- SSL Certificate Issues: Facing SSL certificate errors? You can bypass SSL verification (not recommended for production, since it removes protection against impersonated servers) by adding:

      curl.setopt(curl.SSL_VERIFYPEER, False)

- Rate Limiting: If your requests are being blocked, implement delays between requests or use proxies to distribute your requests across multiple IP addresses.

- Debugging: Use the tool's verbose mode for detailed information about the request and response. Set the option:

      curl.setopt(curl.VERBOSE, True)

  This will help identify where things might be going wrong.
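The advice on rate limiting can be sketched as a retry loop with exponential backoff. The example assumes a `fetch` callable that returns a `(status_code, body)` pair. That interface, the name `fetch_politely`, and the choice of 429 and 503 as the statuses worth retrying are assumptions for illustration, not part of PycURL.

```python
import time


def fetch_politely(url, fetch, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url) until it stops returning a throttling status.

    `fetch` is any callable returning (status_code, body);
    `max_attempts` must be at least 1.
    """
    retry_statuses = {429, 503}  # "Too Many Requests" and "Service Unavailable"
    for attempt in range(max_attempts):
        status, body = fetch(url)
        if status not in retry_statuses:
            return status, body
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)  # wait 1s, 2s, 4s, ... between attempts
    return status, body  # still throttled after the final attempt
```

Doubling the delay after each throttled response gives the server progressively more room to recover. The `sleep` parameter exists only so the waiting can be replaced in tests.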

By following these troubleshooting tips, you can resolve the most common problems that arise when scraping with curl and Python, and keep your scraping runs smooth.

Conclusion

Mastering curl in Python for web scraping unlocks a realm of opportunities for data extraction and automation. This guide has covered the essential aspects of utilizing curl, from its pivotal role in web scraping to setting up your environment and implementing effective scraping logic. By harnessing curl's capabilities, developers can efficiently gather data from diverse online sources, significantly enhancing their ability to extract valuable insights.

Key insights discussed include:

- The foundational setup required for using PycURL

- The implementation of scraping logic

- Troubleshooting common issues that may arise during the process

Emphasizing best practices - such as respecting web scraping policies and utilizing user-agent headers - ensures that developers navigate the complexities of web data extraction responsibly and ethically. The article also highlights the growing significance of web scraping, evidenced by its increasing adoption among developers.

As the landscape of web data extraction evolves, embracing tools like curl is crucial for anyone aiming to excel in this domain. By applying the techniques outlined in this guide, individuals can enhance their scraping capabilities and contribute to a more ethical approach to data collection. The time to master curl with Python for web scraping is now. Seize the potential of data at your fingertips and make informed decisions based on the insights you gather.

Frequently Asked Questions

What is curl and what role does it play in Python web scraping?

Curl is a command-line tool used for transferring information to and from servers using protocols like HTTP and HTTPS. In Python web scraping, it is essential for sending requests to web servers and gathering data.

How can curl be accessed in Python?

Curl can be accessed in Python through libraries like PycURL or by executing command-line calls using the subprocess module.

Why is mastering curl important for web data extraction?

Mastering curl is crucial for web data extraction as it allows developers to interact programmatically with web pages, automate tasks, and gather valuable information efficiently.

What are some key features of curl that enhance its functionality?

Key features of curl include robust error handling, support for various HTTP methods, automatic redirect following, and the ability to retry requests in case of temporary errors using the --retry flag.

What challenges does curl face in large-scale web extraction tasks?

Curl faces challenges such as a lack of built-in concurrency and throttling features, and it does not natively support parsing and extracting structured information from HTML, XML, or JSON.

How can integrating curl with the Zyte API help in web scraping?

Integrating curl with the Zyte API can help mitigate issues related to anti-bot systems and IP bans, enhancing the effectiveness of data collection strategies.

What is the significance of curl's usage among developers as of 2026?

As of 2026, approximately 14% of developers utilize a command-line tool for web extraction, indicating its growing importance in the field of web scraping.