Introduction

Web scraping is an essential technique for extracting useful information from the web. JavaScript running on Node.js provides a capable platform for it. This tutorial covers the essentials of web scraping, from setting up your environment to applying advanced techniques that make data extraction faster and more reliable.

As websites rely more and more on client-side JavaScript, developers increasingly face the challenge of scraping dynamic content. Are you prepared to handle these complexities while following ethical guidelines? Let's explore how you can stay ahead in this rapidly changing field.

Understand Web Scraping Fundamentals with JavaScript and Node.js

Web scraping (also called web harvesting) is an automated method for extracting information from websites. A scraper sends requests to web servers, retrieves the HTML they return, and parses it to pull out the data you need. JavaScript on Node.js is a strong choice for scraping because its non-blocking I/O model lets a single process handle many requests concurrently.
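The non-blocking model described above can be illustrated with a short example that requests several pages at once and waits for all of them. This is a minimal sketch with several assumptions. It relies on Node.js 18 or later, where `fetch` is built in, instead of the Axios library used later in this tutorial, so it runs without extra packages. `fetchAllPages` is a hypothetical helper name, and the URLs in the usage comment are placeholders.

```javascript
// Minimal sketch: fetch several pages concurrently (assumes Node.js 18+ with global fetch).
// fetchAllPages is a hypothetical helper, not part of any library.
async function fetchAllPages(urls, fetchImpl = globalThis.fetch) {
  // All requests are started at once; non-blocking I/O lets them proceed concurrently.
  const results = await Promise.allSettled(
    urls.map(async (url) => {
      const res = await fetchImpl(url);
      if (!res.ok) throw new Error(`HTTP ${res.status} for ${url}`);
      return { url, html: await res.text() };
    })
  );

  // Separate successes from failures so one bad URL does not sink the whole batch.
  const pages = [];
  const errors = [];
  results.forEach((r, i) => {
    if (r.status === "fulfilled") {
      pages.push(r.value);
    } else {
      const reason = r.reason instanceof Error ? r.reason.message : String(r.reason);
      errors.push({ url: urls[i], reason });
    }
  });
  return { pages, errors };
}

// Usage (placeholder URLs):
// fetchAllPages(["https://example.com/a", "https://example.com/b"])
//   .then(({ pages, errors }) => console.log(pages.length, "fetched,", errors.length, "failed"));
```

`Promise.allSettled` is used instead of `Promise.all` so that a single failed request is reported rather than rejecting the entire batch. For real crawls, you would normally combine this with a limit on how many requests run at once.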

To truly grasp web harvesting, it's essential to understand a few key concepts:

- HTTP Requests: Understand GET requests, which retrieve pages, and POST requests, which submit data such as form fields or search queries.

- HTML Parsing: Navigating and extracting data from the Document Object Model (DOM) using libraries like Cheerio is a vital skill.

- Ethical Considerations: It's imperative to approach data extraction responsibly, respecting robots.txt files and adhering to website terms of service.
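The robots.txt check mentioned above is easy to automate. The sketch below uses a deliberately simplified parser: it picks the group for your user agent (falling back to `*`), then applies the longest matching path prefix, with Allow winning ties. It ignores wildcards (`*`, `$`) inside paths, Crawl-delay, and repeated groups for the same agent, so treat it as an illustration rather than a complete implementation of the Robots Exclusion Protocol. `parseRobots` and `isPathAllowed` are hypothetical helper names.

```javascript
// Simplified robots.txt check (hypothetical helpers, not a full RFC 9309 parser).
// Limitations: no path wildcards (*, $), no Crawl-delay, only the first group per agent is used.
function parseRobots(robotsTxt) {
  const groups = []; // each group: { agents: [...], rules: [{ allow, path }] }
  let current = null;
  let lastWasAgent = false;
  for (const rawLine of robotsTxt.split(/\r?\n/)) {
    const line = rawLine.replace(/#.*$/, "").trim(); // strip comments
    const sep = line.indexOf(":");
    if (sep === -1) continue;
    const key = line.slice(0, sep).trim().toLowerCase();
    const value = line.slice(sep + 1).trim();
    if (key === "user-agent") {
      // Consecutive User-agent lines share one group of rules.
      if (!lastWasAgent) {
        current = { agents: [], rules: [] };
        groups.push(current);
      }
      current.agents.push(value.toLowerCase());
      lastWasAgent = true;
    } else if ((key === "allow" || key === "disallow") && current) {
      // An empty Disallow value means "allow everything", so it adds no rule.
      if (value !== "") current.rules.push({ allow: key === "allow", path: value });
      lastWasAgent = false;
    } else {
      lastWasAgent = false; // Sitemap, Crawl-delay, etc. are ignored here
    }
  }
  return groups;
}

function isPathAllowed(robotsTxt, userAgent, path) {
  const groups = parseRobots(robotsTxt);
  const ua = userAgent.toLowerCase();
  // Prefer a group that names this agent; otherwise fall back to the "*" group.
  const group =
    groups.find((g) => g.agents.includes(ua)) ||
    groups.find((g) => g.agents.includes("*"));
  if (!group) return true; // no applicable rules

  // The longest matching path prefix wins; on a tie, Allow wins.
  let best = null;
  for (const rule of group.rules) {
    if (!path.startsWith(rule.path)) continue;
    if (
      !best ||
      rule.path.length > best.path.length ||
      (rule.path.length === best.path.length && rule.allow)
    ) {
      best = rule;
    }
  }
  return best ? best.allow : true;
}
```

In practice, you would download a site's robots.txt once, for example with `fetch(new URL("/robots.txt", pageUrl))`, cache the text, and call `isPathAllowed` before each request to that site.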

By mastering these fundamentals, you’ll be well-equipped to tackle more complex data extraction tasks. Are you ready to elevate your web scraping skills and unlock new opportunities?

Set Up Your Environment for Web Scraping Success

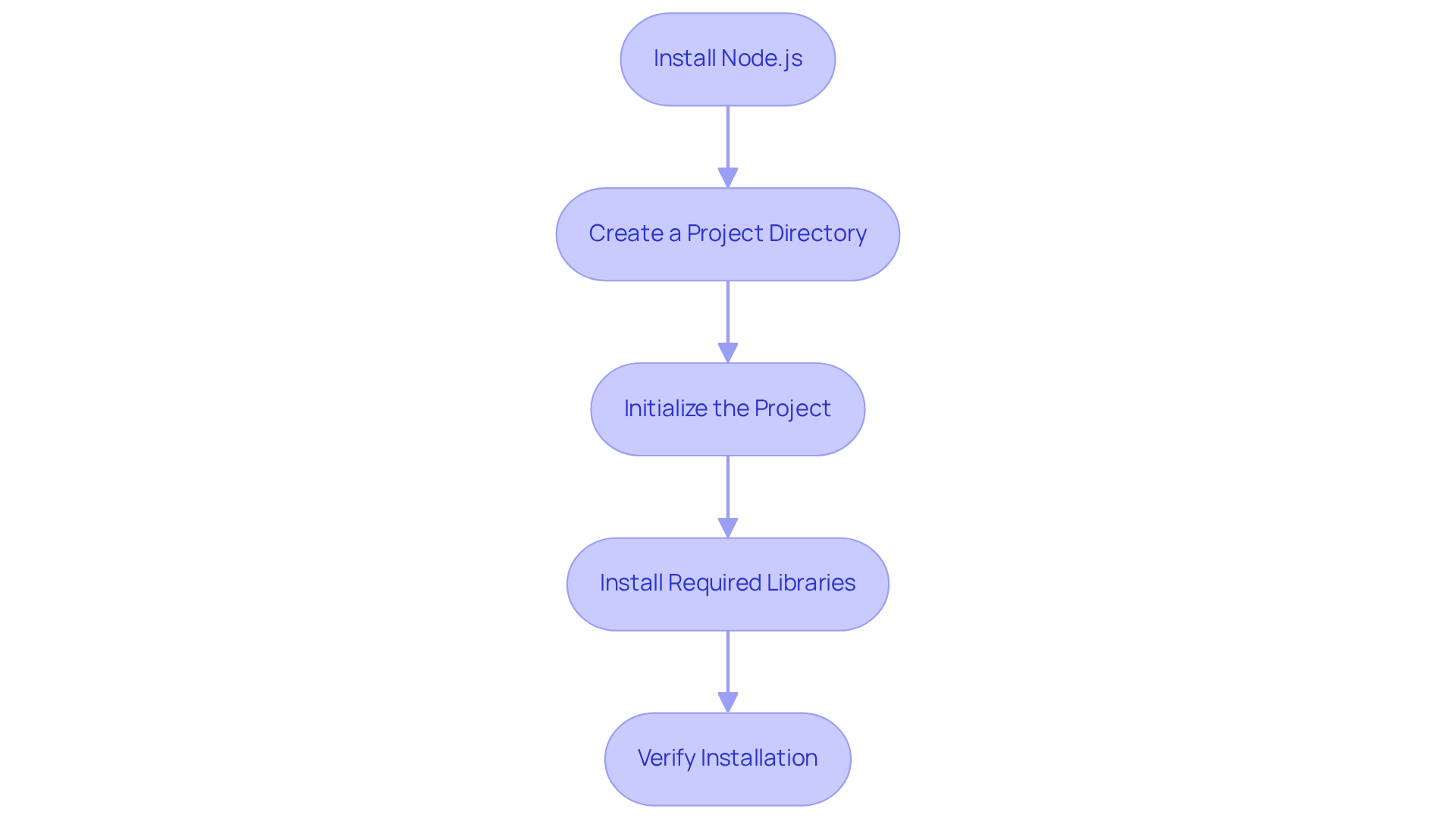

Before you start scraping with JavaScript and Node.js, set up your development environment. Here’s how:

- Install Node.js: Download and install a current LTS (long-term support) release of Node.js from the official website. The installation includes npm (Node Package Manager), which you will use to manage your libraries. A recent release helps ensure compatibility with modern libraries and tools.

- Create a Project Directory: Open your terminal, create a new directory for your project, and change into it:

```bash
mkdir web-scraper
cd web-scraper
```

- Initialize the Project: Run the following command to generate a package.json file:

```bash
npm init -y
```

- Install Required Libraries: Install the libraries used for web scraping:

```bash
npm install axios cheerio puppeteer
```

  - Axios: This library is used for making HTTP requests.
  - Cheerio: It is used for parsing and manipulating HTML content.
  - Puppeteer: This tool is recommended for extracting data from JavaScript-rendered sites, because it automates a real browser and handles dynamic content.

- Verify Installation: Confirm that Node.js and npm are installed correctly by running:

```bash
node -v
npm -v
```

With your environment set up, you’re ready to build your web scraper. A well-configured environment makes data gathering more efficient and helps you handle the complexities of modern web data extraction, which only grow as websites become more dynamic and intricate.

Implement Advanced Techniques and Best Practices for Effective Scraping

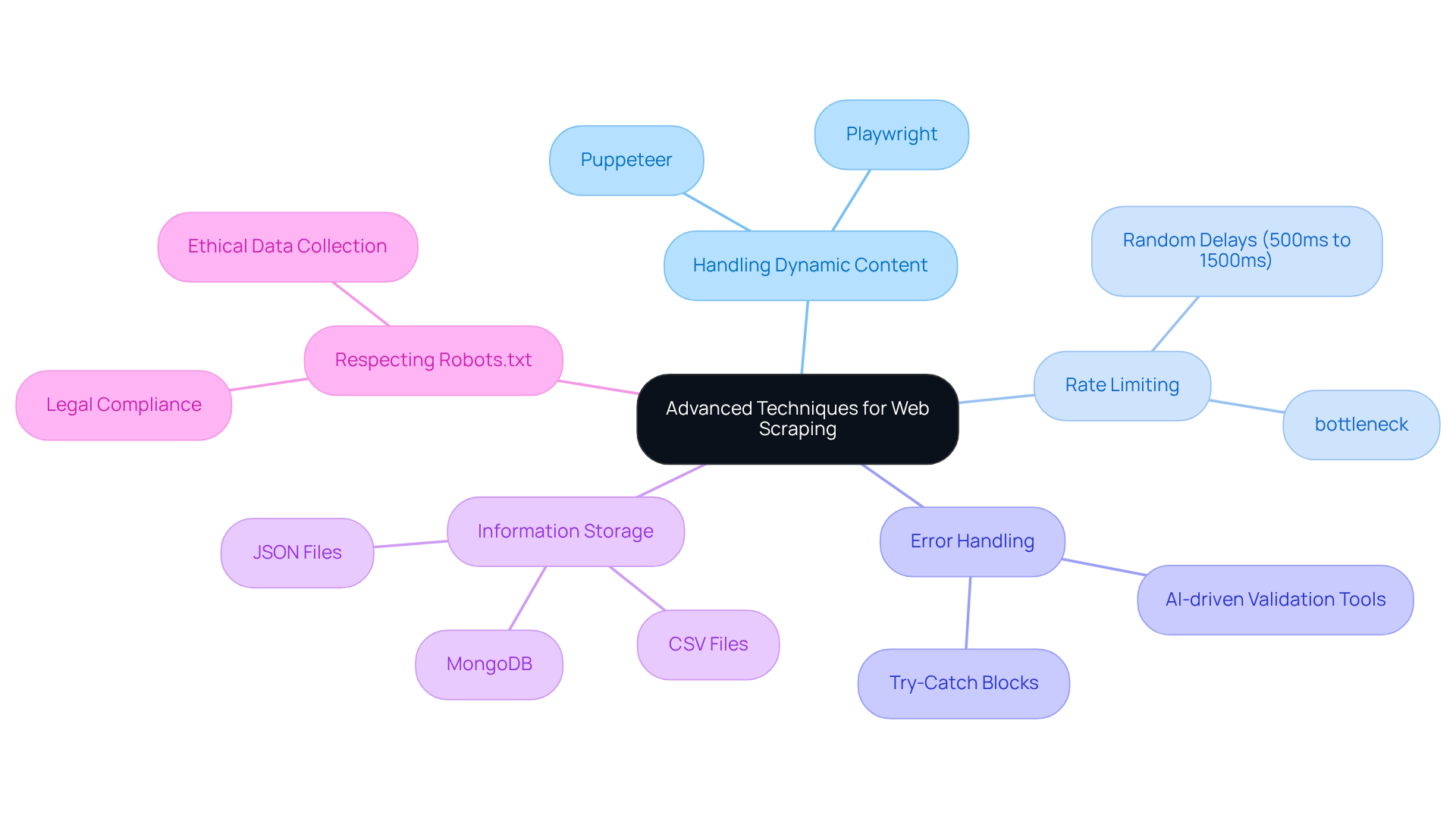

To elevate your web scraping capabilities, consider implementing these advanced techniques and best practices:

- Handling Dynamic Content: Are you struggling with websites that rely on JavaScript to render content? A plain HTTP request to such a site returns only the initial HTML, often without the data you want. Use Puppeteer or Playwright instead. These tools control a headless browser that runs the page's JavaScript and lets you interact with the page as a user would, which is essential for accessing dynamically loaded data. Puppeteer in particular handles dynamic content well, executing JavaScript and interacting with the DOM directly.

- Rate Limiting: Avoid overwhelming servers and triggering blocks by adding deliberate delays between requests. Libraries such as bottleneck can manage request rates for you and keep data collection running smoothly. A responsible extraction pace is crucial.

- Error Handling: Robust error handling is vital. Handle failed requests and unexpected changes in website structure with try-catch blocks, and keep detailed logs for debugging. This significantly improves the reliability of your scraping scripts. Consider also validating the extracted data, for example with AI-driven validation tools, to catch inconsistencies before they reach downstream systems.

- Information Storage: Choose a storage method for your scraped data, such as JSON files, CSV files, or a database like MongoDB, based on your needs and the volume of data collected. This choice affects how efficiently you can later retrieve and analyze the data.

- Respecting Robots.txt: Always check the target website's robots.txt file and follow its data collection policies. This reduces the risk of legal issues and promotes ethical data collection. It also helps you maintain a good relationship with the sites you rely on for data.
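For the dynamic-content case in the list above, here is a minimal Puppeteer sketch. It assumes `npm install puppeteer` has been run. `scrapeRenderedText` and `cleanTexts` are hypothetical helper names, and the URL and CSS selector in the usage comment are placeholders. The Puppeteer calls used (`puppeteer.launch`, `browser.newPage`, `page.goto`, `page.waitForSelector`, `page.$$eval`, `browser.close`) are part of Puppeteer's public API. Playwright supports a similar workflow.

```javascript
// Sketch: scrape text that only appears after client-side JavaScript has run.
// Assumes `npm install puppeteer`; helper names are hypothetical.

// Pure helper: trim whitespace, drop empty strings, and remove duplicates.
function cleanTexts(texts) {
  return [...new Set(texts.map((t) => t.trim()).filter((t) => t.length > 0))];
}

async function scrapeRenderedText(url, selector) {
  const puppeteer = require("puppeteer"); // required lazily, so cleanTexts works without it
  const browser = await puppeteer.launch(); // headless by default
  try {
    const page = await browser.newPage();
    // Wait until network activity settles so client-side rendering has a chance to finish.
    await page.goto(url, { waitUntil: "networkidle2" });
    // Wait for the target elements to appear in the rendered DOM (15 s timeout).
    await page.waitForSelector(selector, { timeout: 15000 });
    // This callback runs inside the browser and collects each match's text content.
    const raw = await page.$$eval(selector, (els) => els.map((el) => el.textContent || ""));
    return cleanTexts(raw);
  } finally {
    await browser.close(); // always release the browser, even if scraping failed
  }
}

// Usage (placeholder URL and selector):
// scrapeRenderedText("https://example.com/products", ".product-title").then(console.log);
```

Waiting for `networkidle2` and then for the selector is a conservative choice. On pages whose network activity never settles, waiting only for the selector may work better.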

By implementing these techniques, you can build more efficient and dependable web scrapers with JavaScript and Node.js that consistently produce high-quality data, positioning your operations for success in the ever-evolving landscape of web data extraction.
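The rate limiting and error handling practices can be combined into one small pattern: fetch pages one at a time with a pause between them, and retry transient failures with exponentially growing waits. This is a minimal sketch with several assumptions. It uses Node.js 18+'s built-in `fetch` instead of Axios and hand-rolls the delay rather than using a library such as bottleneck. The helper names, the default delays, and the choice to retry only on network errors, HTTP 429, and 5xx responses are all illustrative.

```javascript
// Sketch: polite sequential fetching with a fixed delay, plus retries with exponential backoff.
// Hand-rolled stand-in for a rate-limiting library; helper names and defaults are assumptions.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

async function fetchWithRetry(url, { retries = 3, baseDelayMs = 500, fetchImpl = globalThis.fetch } = {}) {
  for (let attempt = 0; ; attempt++) {
    try {
      const res = await fetchImpl(url);
      // Treat 429 (Too Many Requests) and 5xx as transient; other statuses are returned as-is.
      if (res.status === 429 || res.status >= 500) {
        throw new Error(`HTTP ${res.status}`);
      }
      return res;
    } catch (err) {
      if (attempt >= retries) {
        throw new Error(`Giving up on ${url} after ${attempt + 1} attempts: ${err.message}`);
      }
      const wait = baseDelayMs * 2 ** attempt; // e.g. 500, 1000, 2000 ms with the defaults
      console.warn(`Attempt ${attempt + 1} for ${url} failed (${err.message}); retrying in ${wait} ms`);
      await sleep(wait);
    }
  }
}

async function scrapeSequentially(urls, { delayMs = 1000, ...retryOptions } = {}) {
  const results = [];
  for (const [i, url] of urls.entries()) {
    try {
      const res = await fetchWithRetry(url, retryOptions);
      results.push({ url, status: res.status, body: await res.text() });
    } catch (err) {
      // Log and continue: one failed page should not stop the whole run.
      console.error(err.message);
      results.push({ url, error: err.message });
    }
    // Fixed pause between requests (skipped after the last one) to avoid overloading the server.
    if (i < urls.length - 1) await sleep(delayMs);
  }
  return results;
}
```

Processing URLs one at a time is the simplest way to stay polite. A library such as bottleneck becomes useful when you want limited concurrency rather than strictly sequential requests.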

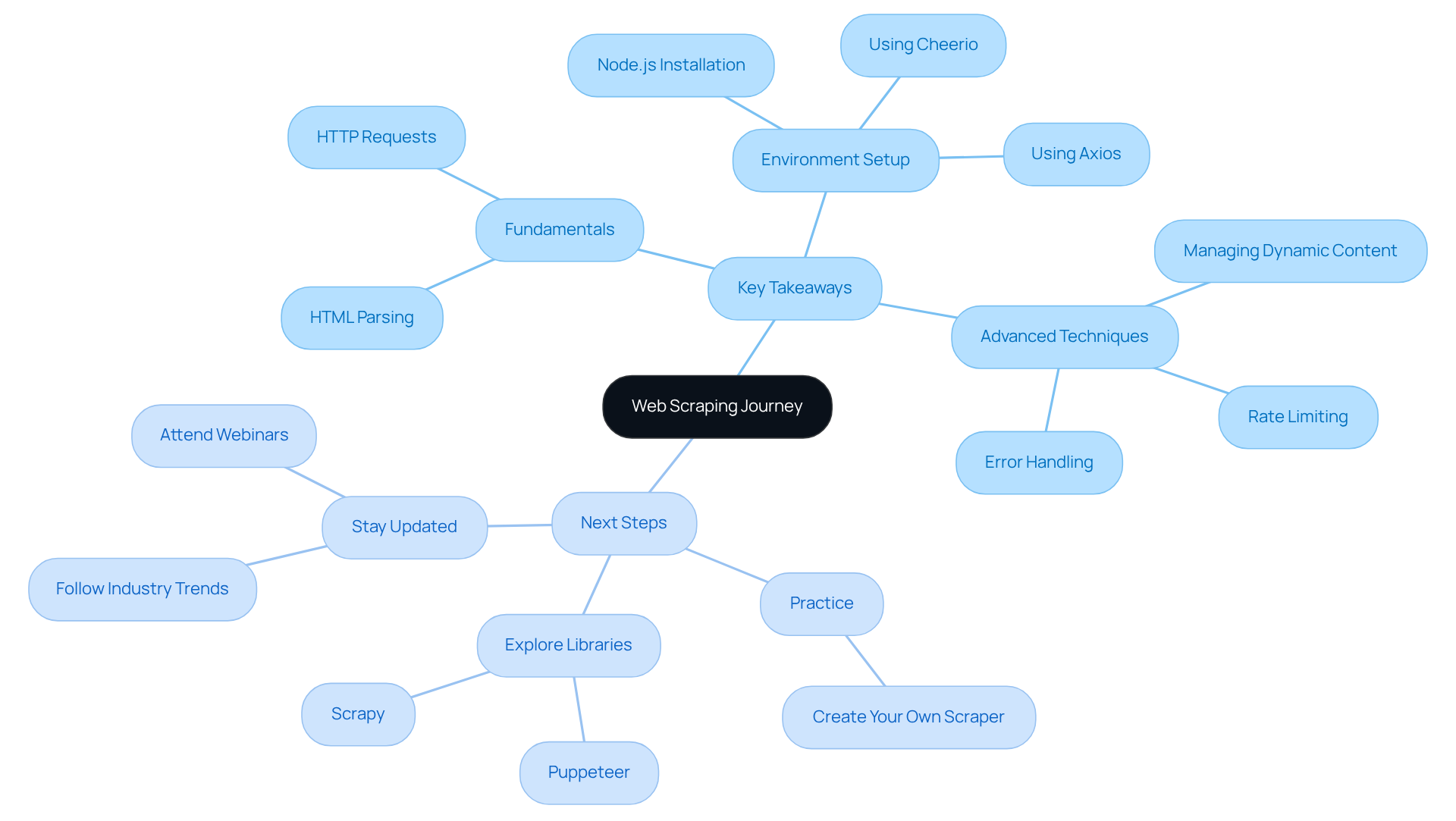

Review Key Takeaways and Next Steps in Your Web Scraping Journey

As you wrap up this tutorial on web scraping with JavaScript and Node.js, consider these essential takeaways:

- Fundamentals: Grasp the core principles of web scraping, including HTTP requests and HTML parsing.

- Environment Setup: Make sure your development environment is set up correctly with Node.js, Axios, and Cheerio.

- Advanced Techniques: Employ strategies for managing dynamic content, rate limiting, and effective error handling.

Next Steps:

- Practice: Create your own web scraper for a website that interests you, applying the techniques you've learned.

- Explore Libraries: Look into additional libraries and tools that can boost your data extraction capabilities, such as Puppeteer for headless browsing.

- Stay Updated: Keep an eye on industry trends and advancements in web extraction technologies to continually enhance your skills.

By following these steps, you’ll be well on your way to mastering web scraping with JavaScript and Node.js in 2025 and using data to drive business success.

Conclusion

Mastering web scraping with JavaScript and Node.js unlocks a realm of possibilities for data extraction and analysis. This tutorial has armed you with essential skills, from grasping the core principles of web scraping to establishing an efficient working environment. By harnessing the power of JavaScript alongside Node.js, you can adeptly navigate the complexities of modern web data extraction.

Key insights include:

- The critical nature of mastering HTTP requests and HTML parsing

- The necessity of a well-configured environment using tools like Axios, Cheerio, and Puppeteer

- Advanced techniques such as managing dynamic content, implementing rate limiting, and ensuring robust error handling, which are vital for crafting reliable web scrapers

- The importance of responsible data collection, including ethical practices such as respecting robots.txt files

As the web scraping landscape evolves, staying informed about the latest trends and tools is essential. Engaging in practical exercises, exploring additional libraries, and keeping up with industry advancements will sharpen your skills and enhance your data extraction strategies. Embrace the journey of mastering web scraping with JavaScript and Node.js. Leverage the power of data to drive informed decisions and achieve business success.

Frequently Asked Questions

What is web scraping?

Web scraping is an automated method for extracting information from websites by sending requests to web servers to retrieve HTML content and parse that information for insights.

Why is JavaScript, particularly with Node.js, a good choice for web scraping?

JavaScript, especially with Node.js, is a strong choice for web scraping due to its non-blocking I/O model, which allows it to efficiently handle multiple requests simultaneously.

What are the key concepts to understand for effective web scraping?

Key concepts include mastering HTTP requests (GET and POST), HTML parsing using libraries like Cheerio, and understanding ethical considerations such as respecting robots.txt files and website terms of service.

What types of HTTP requests are important for web scraping?

Mastering GET and POST requests is crucial for effectively retrieving data from web pages.

What role does HTML parsing play in web scraping?

HTML parsing involves navigating and extracting data from the Document Object Model (DOM), which is essential for obtaining the desired information from web pages.

What ethical considerations should be taken into account when web scraping?

It is important to approach data extraction responsibly by respecting robots.txt files and adhering to the terms of service of the websites being scraped.