Introduction

In today's data-driven marketplace, extracting valuable insights from the web is essential for sales teams striving to stay ahead. With a multitude of web scraper tools at their disposal, businesses have unprecedented opportunities to enhance lead generation and data collection strategies. But with so many options emerging, how can organizations identify the tools that will deliver the most effective solutions tailored to their unique needs in 2025?

This article explores ten free web scraper tools designed to empower sales teams. We’ll reveal their features and benefits, demonstrating how these tools can transform data extraction efforts into a competitive advantage.

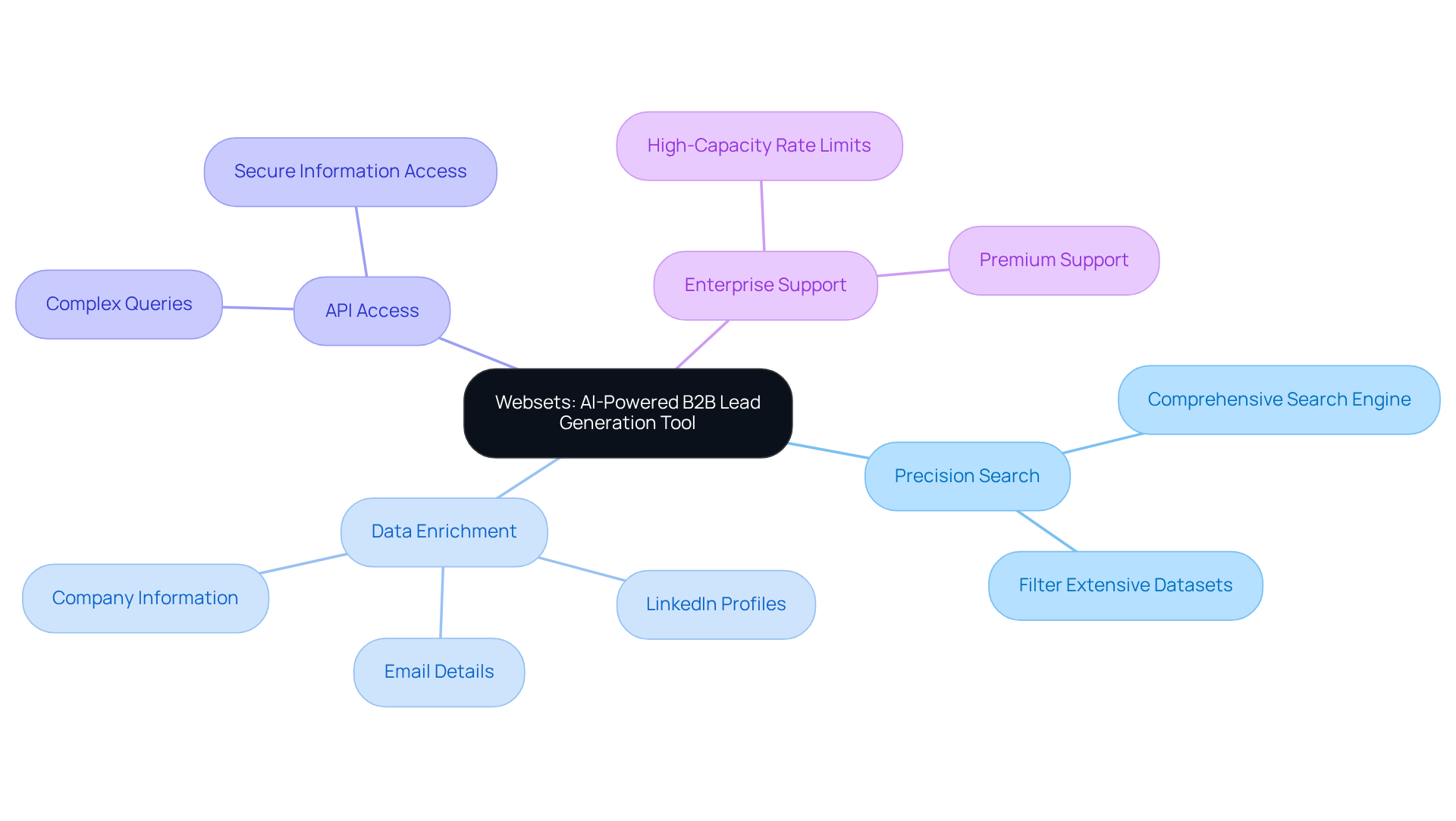

Websets: AI-Powered B2B Lead Generation Tool

Websets stands out as an innovative AI-driven platform specializing in B2B lead generation and candidate discovery. By harnessing advanced algorithms, it empowers businesses to find and connect with the right professionals or organizations effectively.

Imagine having a comprehensive search engine at your fingertips, built for precise searches. Users can filter extensive datasets and enrich their search results with detailed information, including LinkedIn profiles, emails, and company details. This tool is particularly advantageous for sales teams looking to optimize their lead generation processes and enhance operational efficiency.

With an API crafted for complex queries, Websets ensures that users can access the most relevant information quickly and securely. Are you ready to elevate your lead generation strategy?

Moreover, the platform offers flexible high-capacity rate limits and premium support tailored for enterprise needs. This makes Websets an essential resource for effective market research and data enrichment. Don't miss out on the opportunity to transform your approach to lead generation.

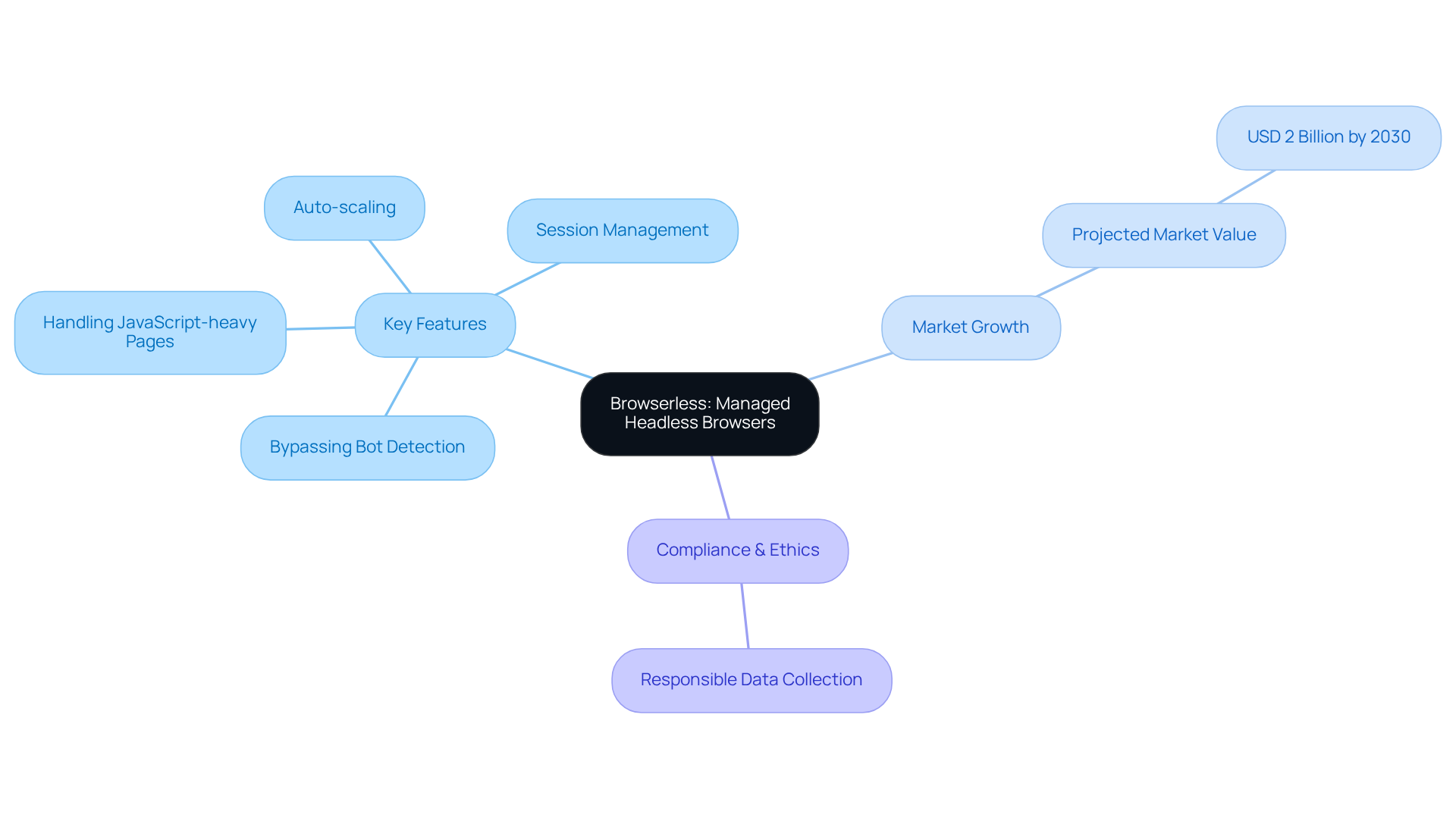

Browserless: Managed Headless Browsers for Complex Sites

Browserless revolutionizes data extraction from complex websites with its managed headless browsers. By effectively bypassing bot detection and expertly handling JavaScript-heavy pages, Browserless empowers developers to automate web harvesting tasks effortlessly. Key features like auto-scaling and session management not only enhance operational efficiency but also allow teams to focus on extracting valuable information instead of managing infrastructure.

The global web data extraction market is projected to exceed USD 2 billion by 2030, which underscores the increasing demand for solutions like Browserless. Developers are recognizing the significant advantages of navigating complex web environments with Browserless, reflecting a broader trend toward automation and efficiency in information collection.

Moreover, as compliance and ethical considerations take center stage in web data extraction practices, Browserless emerges as a responsible choice for modern data collection strategies. Are you ready to elevate your data extraction efforts? Embrace the future with Browserless and streamline your web harvesting tasks today.

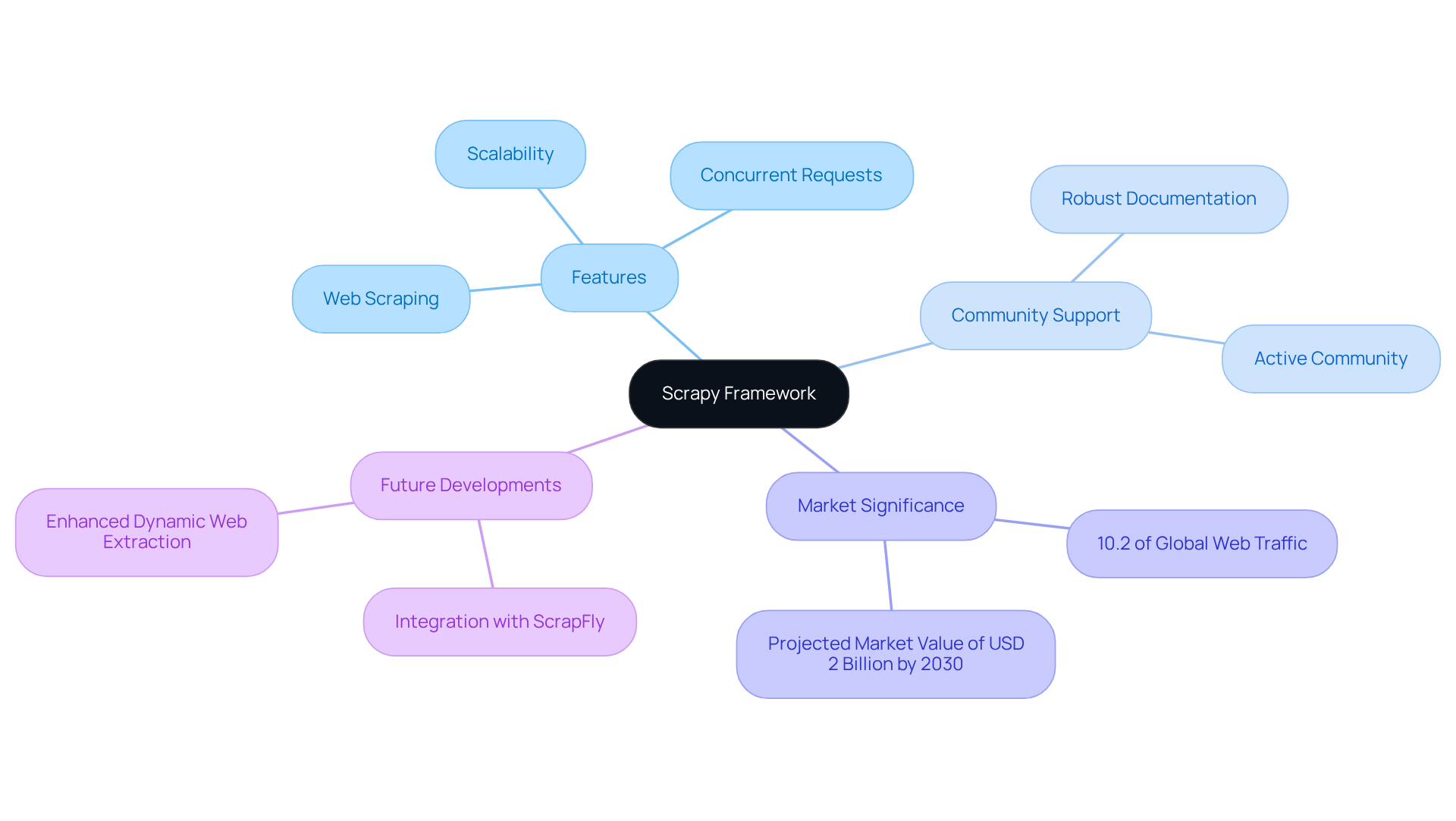

Scrapy: Battle-Tested Python Framework for Data Extraction

Scrapy stands out as a powerful open-source framework tailored for web scraping and information extraction. It empowers users to set specific rules for efficiently gathering the information they require. Backed by a robust community and comprehensive documentation, Scrapy is particularly advantageous for developers looking to build scalable web scrapers. Its ability to manage multiple requests concurrently makes it a favored choice among data scientists and developers alike.
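The two ideas in this paragraph, declarative extraction rules and concurrent requests, can be illustrated with a minimal sketch. It uses only the Python standard library and is not Scrapy's own API. The `RULES` mapping, the `RuleParser` class, the in-memory `PAGES` dictionary, and the `fetch` function are all hypothetical stand-ins so the example runs without network access.

```python
from concurrent.futures import ThreadPoolExecutor
from html.parser import HTMLParser

# Hypothetical rules: field name -> (tag, CSS class) to capture text from.
RULES = {"name": ("h2", "name"), "price": ("span", "price")}

# Hypothetical in-memory pages standing in for real HTTP responses.
PAGES = {
    "https://example.com/a": '<h2 class="name">Acme</h2><span class="price">10</span>',
    "https://example.com/b": '<h2 class="name">Globex</h2><span class="price">12</span>',
}


class RuleParser(HTMLParser):
    """Collect the text of every element whose tag and class match a rule."""

    def __init__(self, rules):
        super().__init__()
        self.rules = rules
        self.active = None  # field currently being captured, if any
        self.items = {field: [] for field in rules}

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        for field, (want_tag, want_class) in self.rules.items():
            if tag == want_tag and want_class in classes:
                self.active = field

    def handle_data(self, data):
        if self.active and data.strip():
            self.items[self.active].append(data.strip())
            self.active = None

    def handle_endtag(self, tag):
        # Stop capturing once any element closes.
        self.active = None


def fetch(url):
    """Stand-in for an HTTP request; returns canned HTML."""
    return PAGES[url]


def scrape(url, rules):
    parser = RuleParser(rules)
    parser.feed(fetch(url))
    return parser.items


def scrape_all(urls, rules, workers=4):
    """Scrape several URLs concurrently; results keep the order of `urls`."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda url: scrape(url, rules), urls))
```

Calling `scrape_all(sorted(PAGES), RULES)` returns one dictionary of extracted fields per page. A real framework adds scheduling, retries, and throttling on top of this basic pattern.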

As we look ahead to 2026, Scrapy continues to evolve, with updates enhancing its capabilities for dynamic web extraction and integration with advanced tools like ScrapFly for JavaScript rendering. Successful projects leveraging Scrapy have showcased its effectiveness across various sectors, from e-commerce to market research, underscoring its versatility and reliability in extracting valuable insights from the web.

Consider this: with 10.2% of global web traffic attributed to scrapers, the significance of web extraction in today’s digital landscape is undeniable. F5 Labs recognizes Scrapy as the leading web data extraction framework, reinforcing its credibility and effectiveness. The web data extraction market is projected to exceed USD 2 billion by 2030, highlighting the growing importance of tools like Scrapy in our data-driven economy. Are you ready to harness the power of Scrapy for your projects?

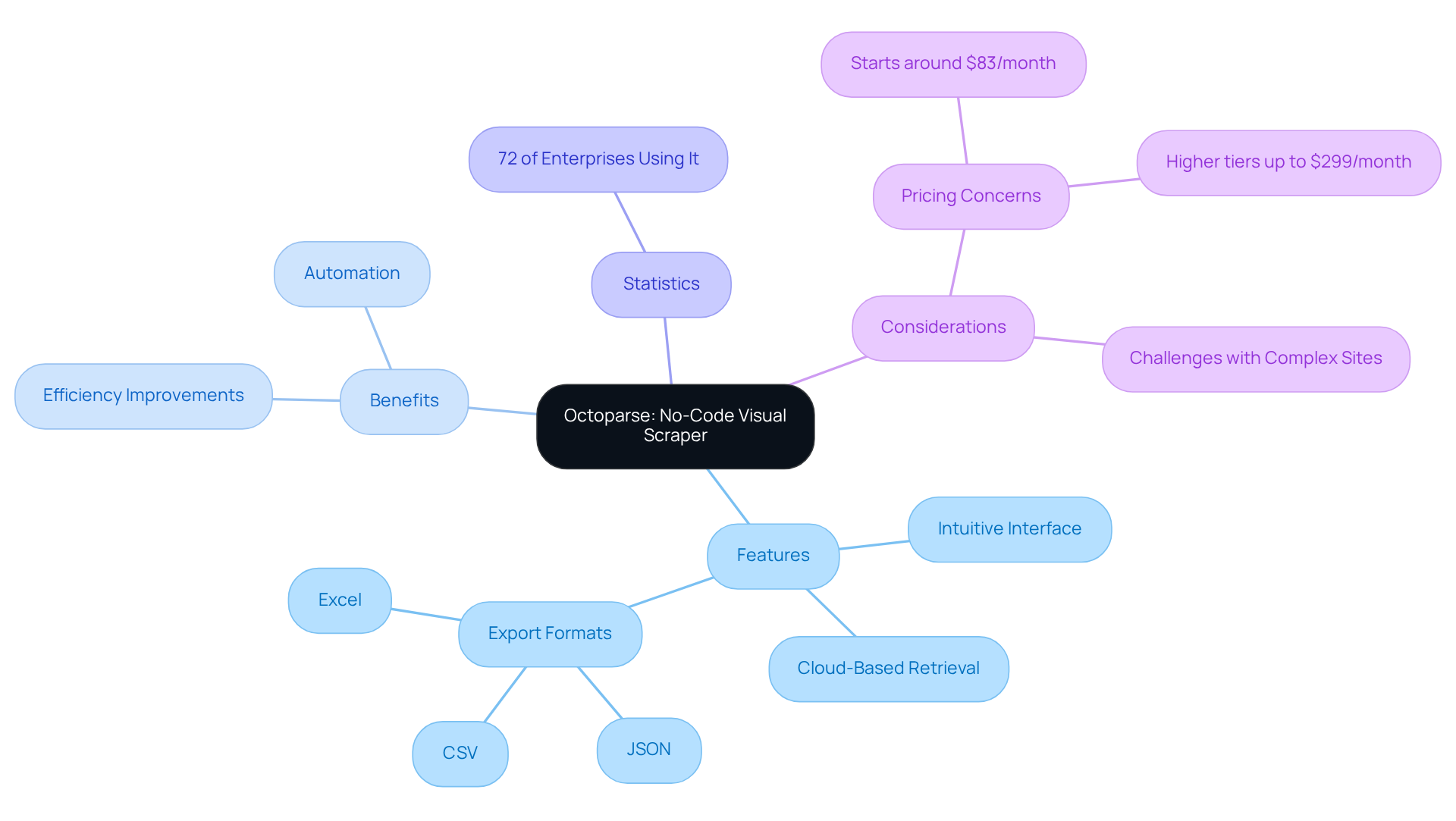

Octoparse: No-Code Visual Scraper for Easy Data Extraction

Octoparse stands out as a leading no-code web extraction tool, empowering users to effortlessly gather information from websites, regardless of their programming skills. Its intuitive visual interface allows users to simply click on elements to define their data extraction workflows, making it accessible to all. With cloud-based retrieval capabilities and options for scheduled harvesting, Octoparse is particularly beneficial for companies aiming to automate their information-gathering processes.

In 2026, the demand for user-friendly tools like Octoparse is clear: 72% of mid-to-large enterprises are leveraging web data extraction for competitive monitoring. This enables teams to streamline operations and boost productivity without needing technical expertise. Firms that have adopted Octoparse report significant improvements in their information collection efficiency, underscoring the powerful impact of no-code solutions in the extraction landscape. As Kanhasoft aptly states, "data extraction is no longer a secret weapon; it’s standard artillery."

Moreover, Octoparse supports exports in various formats, including:

- JSON

- CSV

- Excel

It also offers hundreds of preset templates for popular sites, making it a versatile choice for users. However, potential users should be aware that Octoparse may encounter challenges with complex sites, and its pricing can escalate with increased usage. These factors are crucial considerations for anyone looking to enhance their data extraction capabilities.
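For teams that post-process exported data themselves, the JSON and CSV formats listed above are easy to produce and consume with the Python standard library. The sketch below is not Octoparse's export code; the record fields (`company`, `email`) and the helper names `to_json` and `to_csv` are assumptions made for illustration. Excel output is omitted because it needs a third-party library.

```python
import csv
import io
import json


def to_json(records):
    """Serialize a list of dict records as pretty-printed JSON."""
    return json.dumps(records, indent=2, ensure_ascii=False)


def to_csv(records):
    """Serialize records as CSV, using the union of all keys as columns."""
    fieldnames = sorted({key for record in records for key in record})
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)  # missing keys are written as empty cells
    return buffer.getvalue()


# Hypothetical scraped records; the second one lacks an email.
records = [
    {"company": "Acme", "email": "a@example.com"},
    {"company": "Globex"},
]
```

Taking the union of keys for the CSV header keeps rows aligned even when some scraped records are missing a field, which is common with real-world pages.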

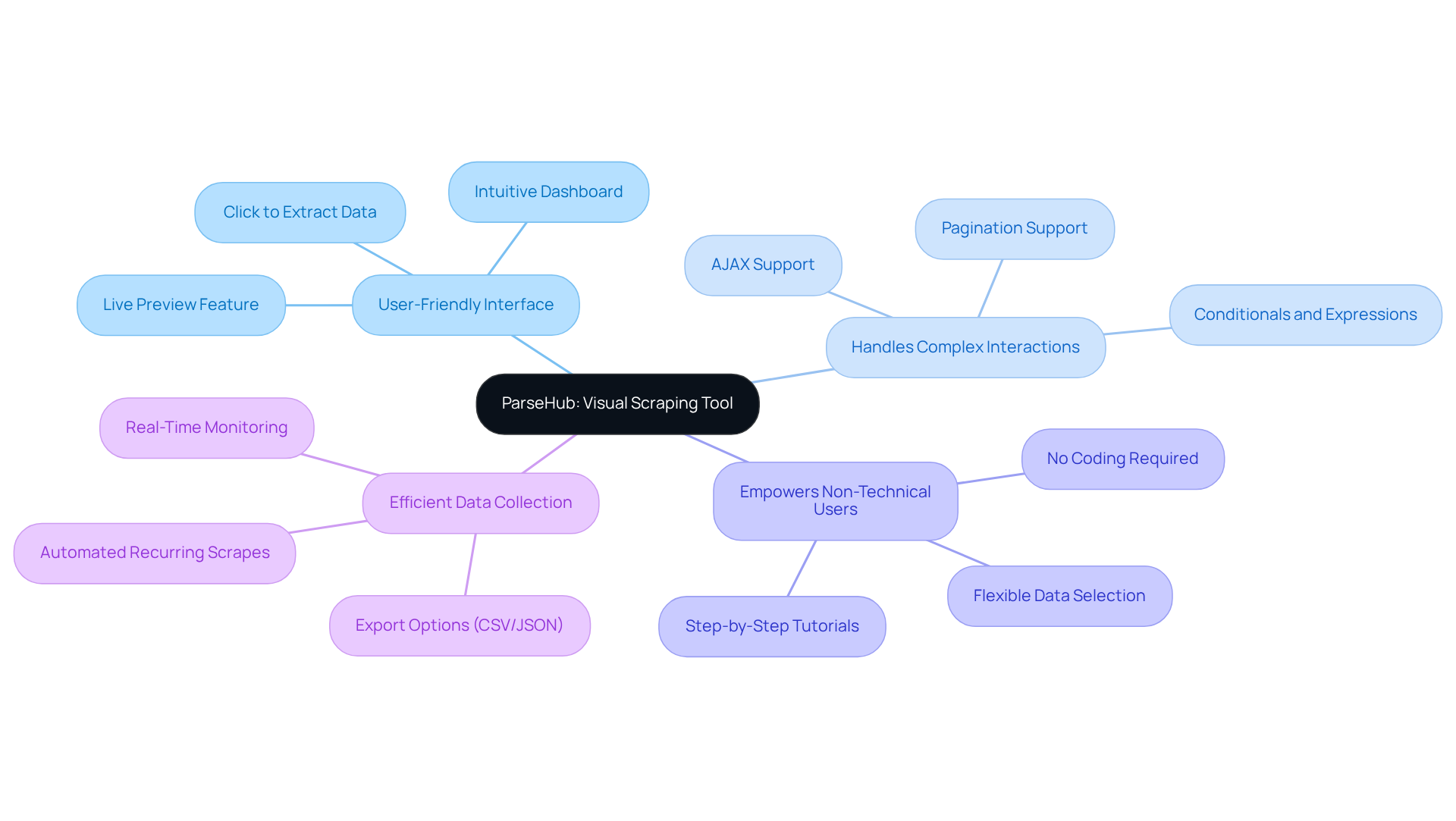

ParseHub: Visual Scraping Tool for Dynamic Websites

ParseHub stands out as a powerful visual extraction tool designed specifically for gathering information from dynamic websites. With its intuitive interface, users can effortlessly click on the data they wish to extract, making it an ideal choice for those without technical expertise.

What sets ParseHub apart is its ability to handle complex interactions, including pagination and AJAX requests. This capability makes it particularly suitable for extracting information from modern web applications, where traditional methods often fall short.
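For readers who script their own scrapers, the pagination handling described above boils down to following "next" links until none remain. The sketch below shows that loop under stated assumptions: `fetch_page` is a hypothetical callable that returns a dictionary with an `items` list and an optional `next` URL, and is not part of ParseHub.

```python
def crawl_pages(start, fetch_page, max_pages=50):
    """Follow 'next' links from `start`, collecting items from each page.

    Stops when there is no next link, when a URL repeats (to avoid loops),
    or after `max_pages` pages.
    """
    results = []
    seen = set()
    url = start
    while url and url not in seen and len(seen) < max_pages:
        seen.add(url)
        page = fetch_page(url)
        results.extend(page["items"])
        url = page.get("next")
    return results


# Hypothetical three-page site used in place of real HTTP responses.
site = {
    "/p1": {"items": ["a", "b"], "next": "/p2"},
    "/p2": {"items": ["c"], "next": "/p3"},
    "/p3": {"items": ["d"], "next": None},
}
all_items = crawl_pages("/p1", site.__getitem__)
```

The `seen` set and the `max_pages` cap are defensive choices: some sites link the last page back to the first, and an uncapped loop would never finish.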

Imagine being able to streamline your data collection process without the need for coding skills. ParseHub empowers users to efficiently gather the information they need, transforming the way businesses approach data extraction.

In a world where data drives decisions, having the right tools at your disposal is crucial. Don’t miss out on the opportunity to enhance your data extraction capabilities with ParseHub.

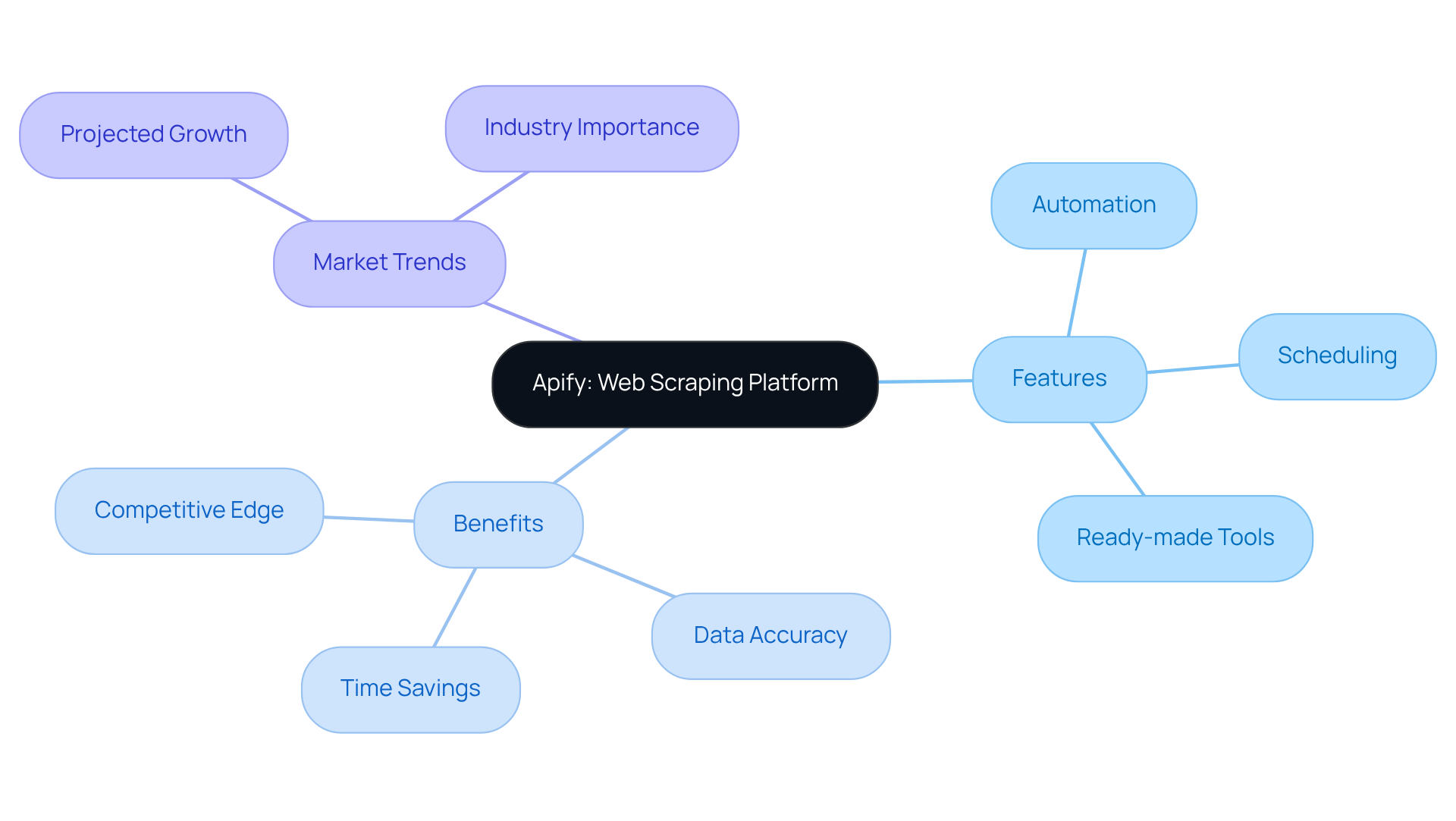

Apify: Comprehensive Scraping Platform with Scheduling Features

Apify stands out as a leading web scraping platform, expertly automating information extraction and browser automation. With a diverse array of ready-made tools and templates, it empowers users to efficiently gather data from various websites. One of its standout features is the scheduling capability, which allows businesses to automate their information collection processes, ensuring they always have the latest details at their fingertips.

As we look ahead to 2026, the trend toward automated information extraction is gaining significant traction, with Apify leading the charge. Its intuitive scheduling features are designed to meet the evolving demands of businesses. Companies leveraging Apify for scheduled information collection have reported remarkable improvements in their ability to monitor market trends and competitor activities in real time. For instance, e-commerce businesses are using Apify to track pricing changes and product availability, helping them stay competitive in a rapidly changing market.
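The price-tracking use case can be sketched as a job that runs on a fixed interval and compares each snapshot with the previous one. This is a simplified illustration, not Apify's scheduler: `run_every` and `diff_prices` are hypothetical helpers, and the snapshots are plain dictionaries mapping a product identifier to a price.

```python
import time


def run_every(job, interval_seconds, runs, sleep=time.sleep):
    """Call `job` a fixed number of times, pausing between calls."""
    results = []
    for i in range(runs):
        results.append(job())
        if i < runs - 1:
            sleep(interval_seconds)
    return results


def diff_prices(previous, current):
    """Return {sku: (status, old_price, new_price)} for every difference."""
    changes = {}
    for sku, price in current.items():
        old = previous.get(sku)
        if old is None:
            changes[sku] = ("new", None, price)
        elif old != price:
            changes[sku] = ("changed", old, price)
    for sku in previous.keys() - current.keys():
        changes[sku] = ("removed", previous[sku], None)
    return changes
```

Passing `sleep` as a parameter makes the loop easy to test without waiting. In production, a hosted scheduler or cron job would typically replace `run_every`.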

Organizations are increasingly recognizing the importance of timely information, making Apify's scheduling features essential. Users have found that automating information extraction not only saves time but also enhances the accuracy of the data collected. This positions Apify as a crucial tool for sales teams looking to elevate their performance through informed strategies and insights. Additionally, Websets' AI-powered tools can complement Apify, providing improved solutions for B2B lead generation and information analysis.

Some industry analyses go further and project that the global web extraction market will surpass USD 9 billion by the end of 2025, well above the USD 2 billion by 2030 cited earlier in this article; estimates differ widely across sources. Either way, the figures underscore the growing importance of platforms like Apify in today’s data-driven landscape. Are you ready to harness the power of automated information extraction and stay ahead of the competition?

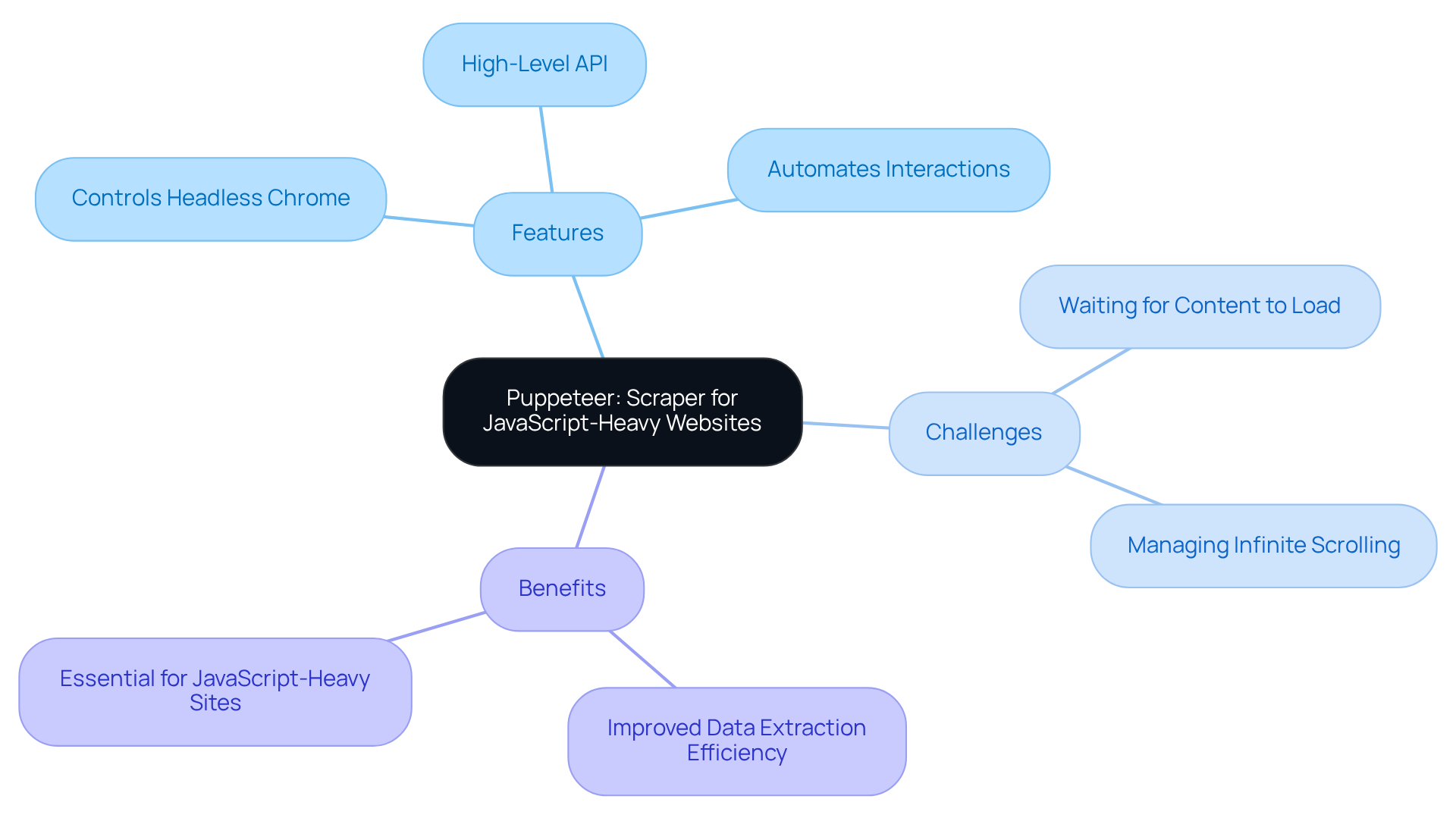

Puppeteer: Scraper for JavaScript-Heavy Websites

Puppeteer stands out as a formidable Node.js library crafted by Google, specifically designed to control headless Chrome or Chromium via a high-level API. Its true power lies in its ability to scrape JavaScript-heavy websites, enabling developers to automate interactions and extract information with remarkable precision. By rendering pages just like a conventional browser, Puppeteer simplifies data extraction from complex web applications, establishing itself as an indispensable tool for modern web harvesting.

As businesses increasingly navigate the complexities of dynamic web content, the reliance on headless browser automation is on the rise. Developers are turning to Puppeteer to tackle common hurdles in web data extraction, such as waiting for content to load and managing infinite scrolling.
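Both hurdles follow simple control-flow patterns that are worth seeing in isolation. Puppeteer itself is a Node.js library, so the sketch below is not Puppeteer code. It is a language-neutral illustration written in Python, where `condition`, `scroll_once`, and `count_items` are hypothetical callables that a real script would back with browser calls.

```python
import time


def wait_for(condition, timeout=5.0, interval=0.1):
    """Poll `condition` until it returns a truthy value or the timeout expires."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        value = condition()
        if value:
            return value
        time.sleep(interval)
    raise TimeoutError("condition not met within timeout")


def scroll_until_stable(scroll_once, count_items, max_rounds=20):
    """Scroll repeatedly until the item count stops growing.

    Returns the final item count. `max_rounds` caps the loop for feeds
    that never run out of content.
    """
    previous = count_items()
    for _ in range(max_rounds):
        scroll_once()
        current = count_items()
        if current == previous:
            break
        previous = current
    return previous
```

In a real scraper, `scroll_once` would usually also wait for newly loaded items to appear, for example by calling `wait_for` on a higher item count, before the counts are compared.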

Consider this: many developers have reported significant improvements in their data extraction efficiency when utilizing Puppeteer. One developer noted, "Puppeteer streamlines the process of scraping by imitating user behavior, which is essential for retrieving information on sites that heavily depend on JavaScript." This perspective highlights a broader trend where headless Chrome is emerging as the go-to solution for information-gathering tasks, particularly in sectors like e-commerce and finance, where real-time insights are crucial for maintaining a competitive edge.

Incorporating Puppeteer into your toolkit could revolutionize your approach to web data extraction. Are you ready to enhance your efficiency and stay ahead in your industry?

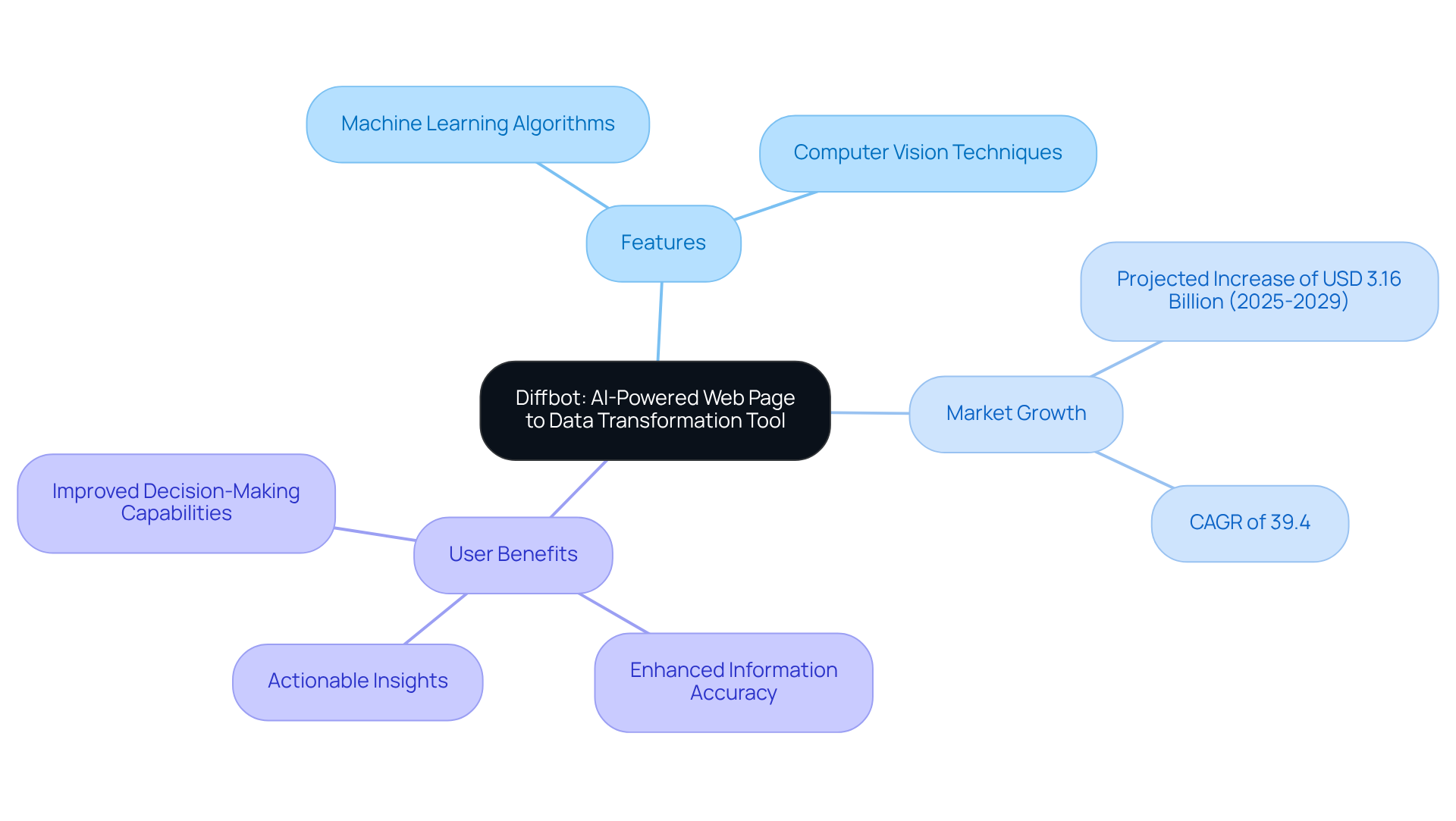

Diffbot: AI-Powered Web Page to Data Transformation Tool

Diffbot stands at the forefront of AI-driven tools designed for web information retrieval, expertly transforming web pages into structured data. By harnessing advanced machine learning algorithms and computer vision techniques, Diffbot automates the gathering process, enabling users to effortlessly extract insights from unstructured web content. This capability is particularly beneficial for companies seeking to leverage AI for a competitive edge, facilitating large-scale information gathering with remarkable efficiency.

As the AI-driven web extraction market is projected to grow significantly, with an anticipated increase of USD 3.16 billion from 2025 to 2029, tools like Diffbot are becoming essential for organizations aiming to refine their information strategies. Industry experts highlight that integrating machine learning into web harvesting not only enhances information accuracy but also simplifies the extraction process, making it accessible to a broader range of users.

Consider this: companies utilizing Diffbot have successfully converted their web information into actionable insights, thereby boosting their market intelligence and decision-making capabilities. Are you ready to transform your data into a strategic asset? Embrace the power of Diffbot and elevate your organization's information strategy.

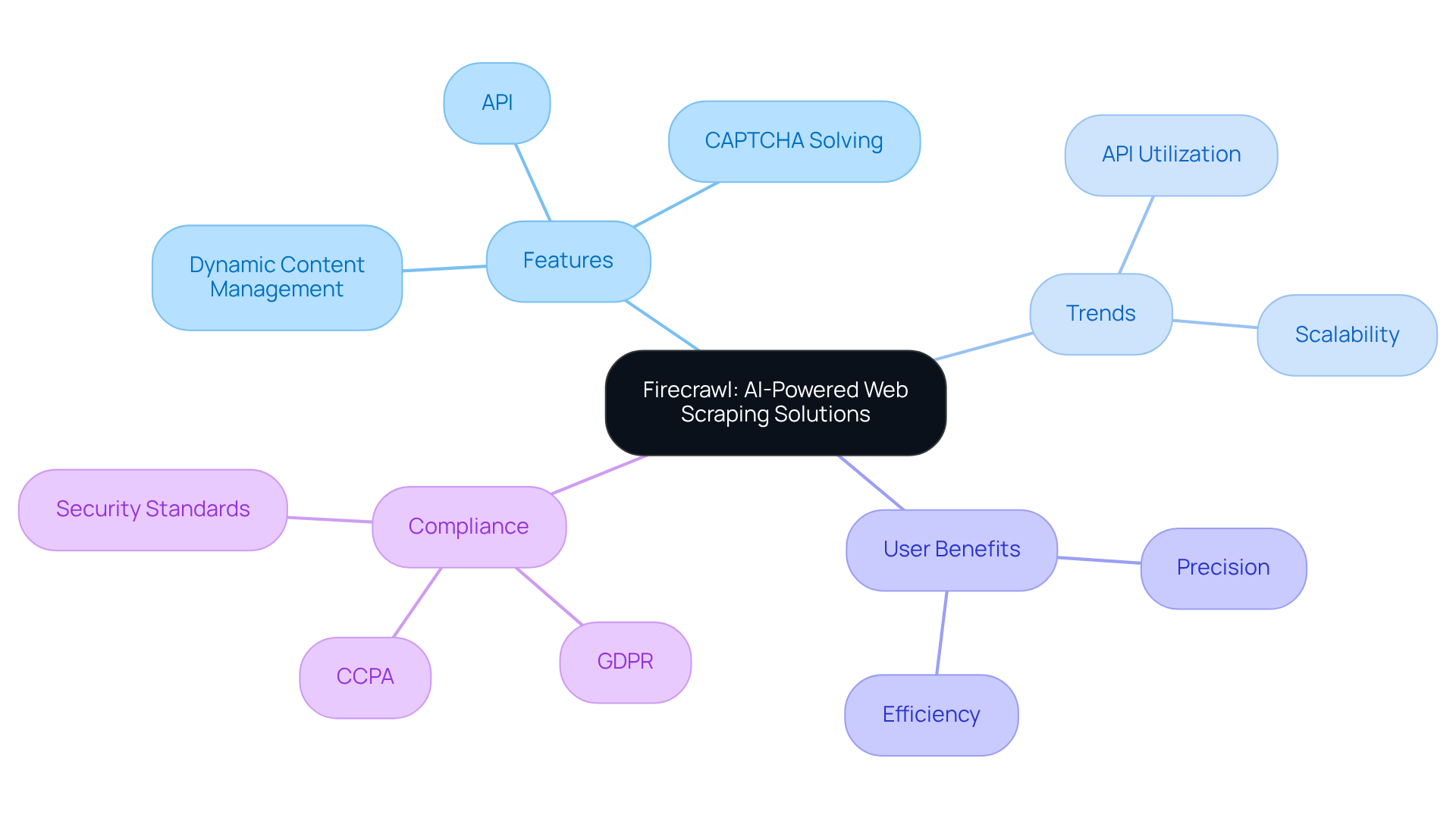

Firecrawl: AI-Powered Web Scraping Solutions

Firecrawl is an advanced AI-powered web scraping solution that excels at extracting structured information from any website. With its robust API, developers can efficiently scrape, crawl, and manage information gathering tasks. Key features include automatic CAPTCHA solving and dynamic content management, making it an ideal choice for companies eager to leverage AI for enhanced information retrieval processes.

Current trends indicate a growing reliance on API-based information retrieval tools, as many organizations recognize the effectiveness and scalability these solutions offer. Firecrawl exemplifies this shift, providing seamless integration capabilities that allow businesses to adapt quickly to changing information landscapes.

Companies using Firecrawl have reported significant improvements in their information gathering efforts, with structured extraction becoming more efficient and precise. As businesses increasingly adopt AI technologies, Firecrawl's innovative features position it as an essential tool for enhancing information strategies in 2026 and beyond.

However, alongside these technical capabilities, companies must navigate the legal frameworks surrounding AI web extraction, such as GDPR and CCPA. Firecrawl not only meets technical requirements but also aligns with ethical standards, much like enterprise-grade solutions that prioritize security and compliance through SOC 2 certification and tailored support agreements. By balancing innovation with responsible information practices, Firecrawl stands out as a competitive alternative to offerings like Websets, ensuring both compliance and advanced extraction capabilities.

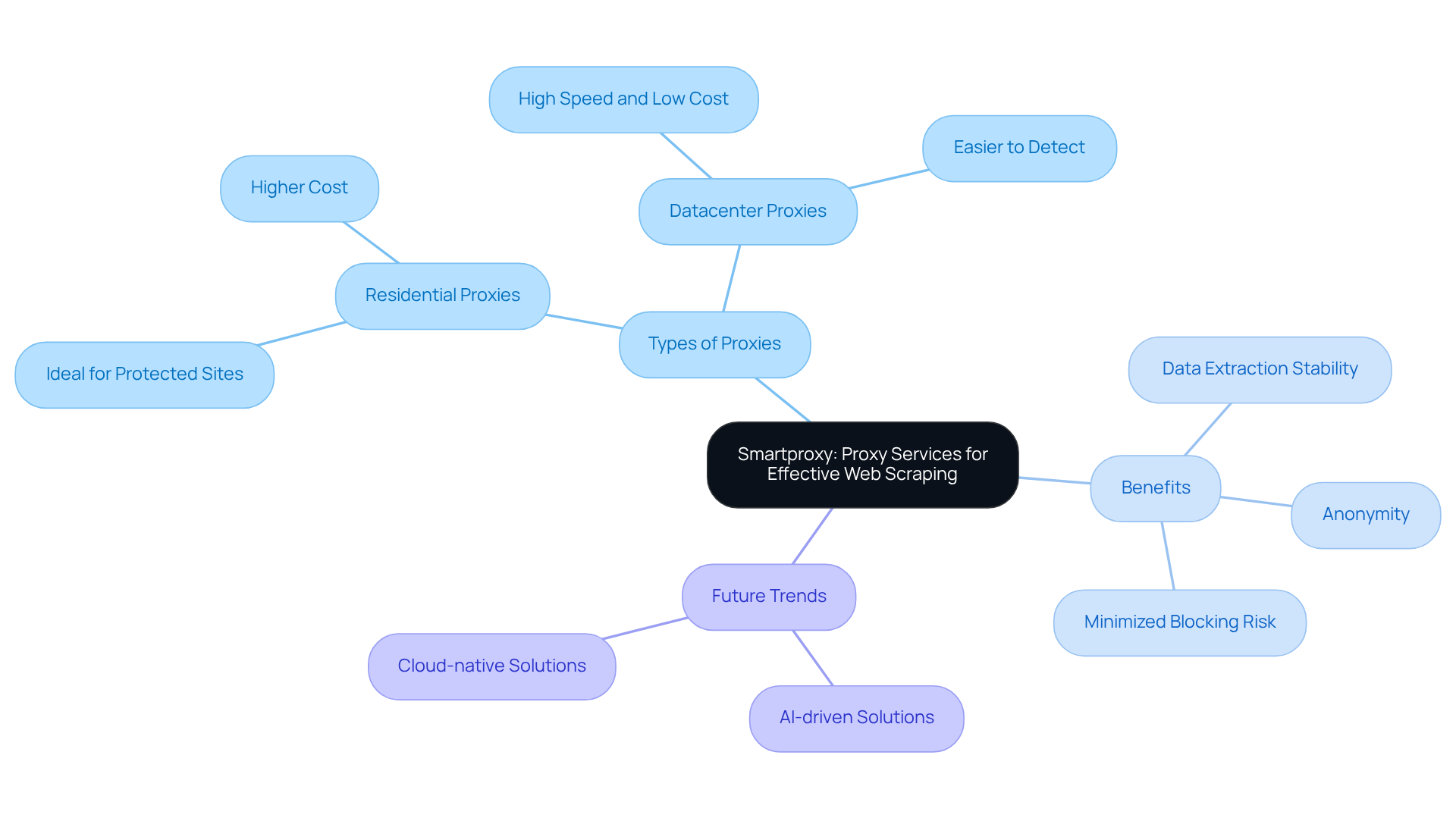

Smartproxy: Proxy Services for Effective Web Scraping

Smartproxy stands out with its extensive range of proxy services, expertly crafted to elevate your web data extraction efforts. With a robust network of both residential and datacenter proxies, Smartproxy empowers users to gather information from a multitude of websites while significantly minimizing the risk of being blocked. The platform's rotating proxy feature is a game-changer, enhancing anonymity and allowing users to navigate restrictions effortlessly.
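Proxy rotation itself is a simple idea: each request goes out through the next proxy in a pool. The sketch below shows one way to do this with the Python standard library. It is not Smartproxy's client or API. The `ProxyRotator` class is hypothetical, and the proxy addresses are placeholders that a real setup would replace with the endpoints and credentials supplied by the provider.

```python
import itertools
import urllib.request


class ProxyRotator:
    """Hand out proxies from a fixed pool in round-robin order."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._cycle = itertools.cycle(proxies)

    def next_proxy(self):
        return next(self._cycle)

    def opener(self):
        """Return (proxy, opener) where the opener routes traffic via that proxy."""
        proxy = self.next_proxy()
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        return proxy, urllib.request.build_opener(handler)


# Placeholder addresses; not real proxy endpoints.
rotator = ProxyRotator(["http://p1.example:8000", "http://p2.example:8000"])
```

Each call to `rotator.opener()` yields an opener whose `open(url)` method sends the request through the next proxy in the pool. Round-robin is the simplest policy; a production rotator might also drop proxies that fail repeatedly.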

As Nikolai Izoitko, Content Manager, aptly puts it, "Proxies enhance both anonymity and data extraction stability, reducing the risk of interruptions." This capability is vital for businesses striving to scrape data efficiently and securely, especially in a landscape where compliance and ethical data collection are non-negotiable.

Looking ahead to 2026, web scraping is evolving towards AI-driven and cloud-native solutions. This shift positions Smartproxy as an indispensable tool for companies across various sectors, including e-commerce and financial institutions. By integrating Smartproxy into their scraping strategies, businesses can ensure they remain competitive in a rapidly changing digital environment.

Conclusion

The landscape of web scraping tools is evolving at an unprecedented pace, offering businesses an array of powerful and diverse options. This article has spotlighted ten exceptional free web scraper tools for 2025, each meticulously designed to enhance lead generation and streamline data extraction processes. From AI-driven solutions like Websets and Diffbot to user-friendly platforms such as Octoparse and ParseHub, these tools cater to the diverse needs of sales teams and developers alike.

Key insights reveal the critical role of automation and efficiency in data gathering. Tools like Browserless and Puppeteer excel in navigating complex web environments, while Scrapy and Apify provide robust frameworks for scalable data extraction. The rise of AI-driven solutions, particularly Firecrawl and Diffbot, underscores the increasing demand for advanced capabilities in the competitive realm of information retrieval. By leveraging these tools, organizations can not only boost operational efficiency but also gain valuable insights that inform strategic decision-making.

As the digital landscape continues to expand, effectively harnessing data will be paramount for success. Embracing these innovative web scraping tools empowers businesses to outpace the competition, optimize lead generation strategies, and ultimately enhance overall performance. The future of data extraction is here; now is the time to explore these powerful resources and transform how information is gathered and utilized.

Frequently Asked Questions

What is Websets?

Websets is an AI-powered platform that specializes in B2B lead generation and candidate discovery, using advanced algorithms to help businesses find and connect with professionals or organizations effectively.

How does Websets enhance lead generation?

Websets allows users to filter extensive datasets and enrich search results with detailed information such as LinkedIn profiles, emails, and company details, optimizing lead generation processes for sales teams.

What features does Websets offer for its users?

Websets offers an API for complex queries, flexible high-capacity rate limits, and premium support tailored for enterprise needs, making it a valuable resource for market research and data enrichment.

What is Browserless?

Browserless is a managed headless browser service designed to extract data from complex websites, effectively bypassing bot detection and handling JavaScript-heavy pages for developers.

What are the key features of Browserless?

Key features of Browserless include auto-scaling and session management, which enhance operational efficiency and allow teams to focus on data extraction rather than infrastructure management.

Why is Browserless significant in the data extraction market?

The global market for web data extraction is projected to exceed USD 2 billion by 2030, indicating a growing demand for solutions like Browserless that facilitate automation and efficient information collection.

What is Scrapy?

Scrapy is a powerful open-source framework for web scraping and information extraction, allowing users to set specific rules for gathering data efficiently.

What advantages does Scrapy offer to developers?

Scrapy is backed by a robust community and comprehensive documentation, making it advantageous for developers to build scalable web scrapers that can manage multiple requests concurrently.

How is Scrapy evolving in the future?

Scrapy continues to evolve with updates enhancing its capabilities for dynamic web extraction and integration with advanced tools like ScrapFly for JavaScript rendering.

What is the significance of web extraction in today's digital landscape?

With 10.2% of global web traffic attributed to scrapers, web extraction is increasingly important. Tools like Scrapy are recognized as leading frameworks in the data-driven economy, and the market for web data extraction is projected to exceed USD 2 billion by 2030.