Introduction

Web scraping has become an essential tool for businesses that want to tap into the vast reservoir of information available online. By automating data extraction from websites, organizations can gather the inputs that drive strategic decisions, from market research to competitive analysis. Yet as the landscape shifts, so do the challenges: ethical and legal compliance on one side, technical obstacles on the other.

How can businesses adeptly navigate these complexities to successfully scrape website data to Excel in 2025? This guide will explore the crucial steps, tools, and best practices that empower users to enhance their data extraction efforts while upholding ethical standards. Prepare to maximize your potential in the world of web scraping.

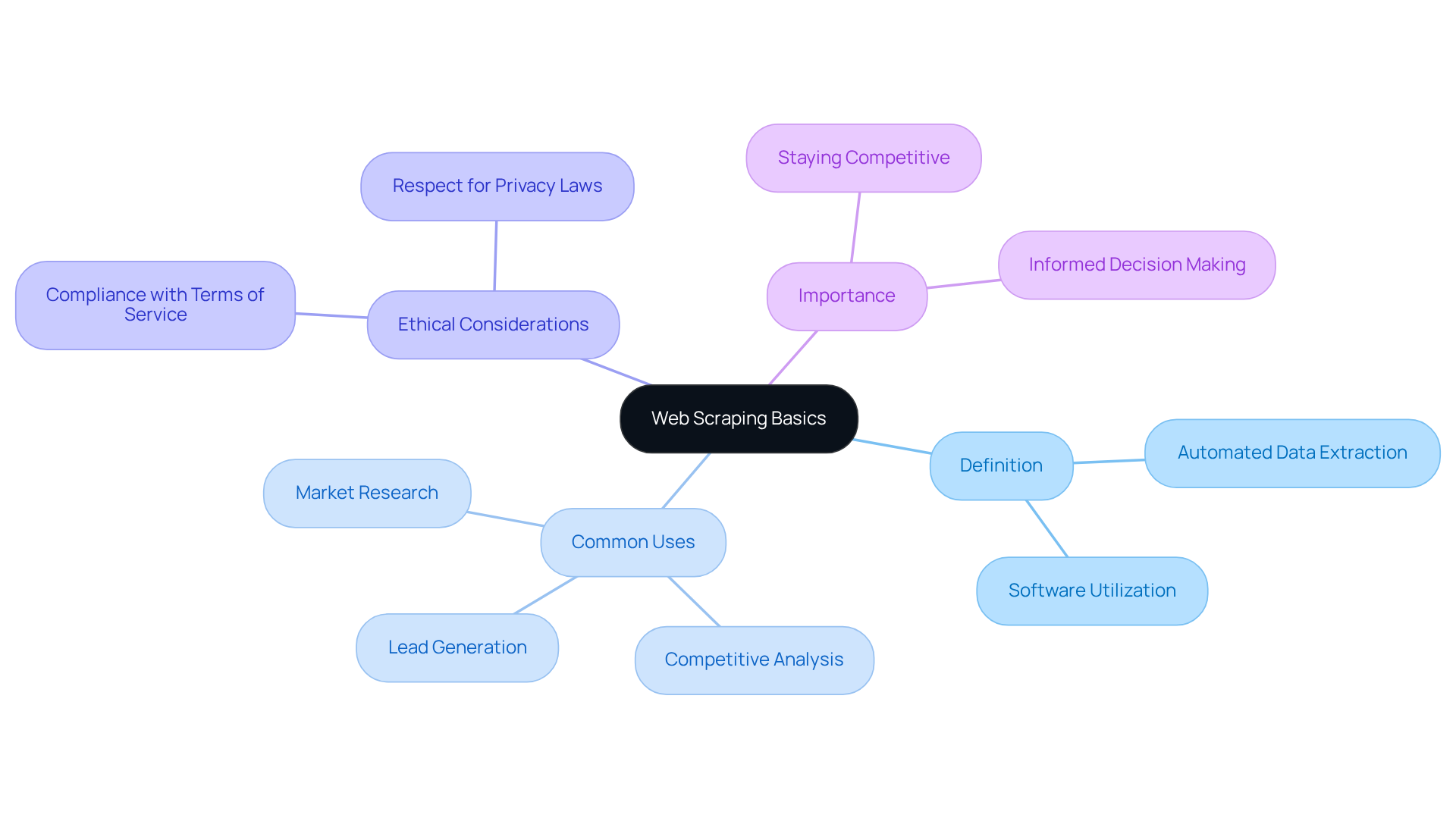

Understand Web Scraping Basics

Web scraping is an automated method for retrieving information from online sources. It involves fetching a web page and parsing its content to extract specific data. Understanding this process is crucial for making use of the vast resources available on the internet.

Definition: Web scraping is the use of software to extract large amounts of data from websites, typically in HTML format. This technique allows businesses to gather valuable insights efficiently.
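To make the fetch-and-parse idea concrete, here is a minimal sketch that uses only Python's standard library. The HTML snippet, the `product` class name, and the `ProductParser` and `extract_products` names are illustrative assumptions; in a real run, the HTML would be the body of a response from a site that permits scraping.

```python
from html.parser import HTMLParser

# Hypothetical page content standing in for a downloaded web page.
SAMPLE_HTML = """
<html><body>
  <h2 class="product">Desk Lamp</h2>
  <p>In stock</p>
  <h2 class="product">Office Chair</h2>
</body></html>
"""


class ProductParser(HTMLParser):
    """Collects the text of every <h2 class="product"> element."""

    def __init__(self):
        super().__init__()
        self.products = []
        self._in_product = False

    def handle_starttag(self, tag, attrs):
        # attrs arrives as a list of (name, value) pairs.
        if tag == "h2" and dict(attrs).get("class") == "product":
            self._in_product = True

    def handle_endtag(self, tag):
        if tag == "h2":
            self._in_product = False

    def handle_data(self, data):
        # Keep only non-empty text found inside a matching <h2>.
        if self._in_product and data.strip():
            self.products.append(data.strip())


def extract_products(html):
    """Parse the HTML string and return the product names it contains."""
    parser = ProductParser()
    parser.feed(html)
    return parser.products


print(extract_products(SAMPLE_HTML))
```

The parser ignores everything except the elements it was told to look for, which is the essence of scraping: the page is written for people, and the scraper picks out only the fields you need.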

Common Uses: Companies utilize web scraping for various purposes, including:

- Market research

- Competitive analysis

- Lead generation

By harnessing this technology, organizations can stay ahead of the competition and make informed decisions.

Ethical Considerations: It’s essential to check the website's terms of service and robots.txt file to ensure compliance with legal and ethical standards. Adhering to these guidelines not only helps prevent potential legal issues but also promotes responsible information usage. Are you ready to explore the benefits of web scraping while maintaining ethical integrity?
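A robots.txt check can be automated. The sketch below uses Python's standard `urllib.robotparser` module on a hypothetical robots.txt body; the rules, the `my-scraper` user-agent name, the example.com URLs, and the `is_allowed` helper are assumptions for illustration. It covers robots.txt only, so the terms of service still need to be read by a person.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real one lives at <site>/robots.txt.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt, user_agent, url):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_allowed(SAMPLE_ROBOTS_TXT, "my-scraper", "https://example.com/products"))
print(is_allowed(SAMPLE_ROBOTS_TXT, "my-scraper", "https://example.com/private/report"))
```

Against a live site, `RobotFileParser.set_url()` followed by `read()` downloads the file instead of parsing a local string.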

Select the Right Tools for Data Scraping

Excel Power Query: This built-in Excel feature is ideal for novices because it imports web data directly into a worksheet. Navigate to the Data tab, select 'Get Data', then 'From Web' (in some Excel versions it sits under 'From Other Sources'), and enter the URL. Excel then lists the tables it detects on the page so you can choose which one to load.

Octoparse: A no-code tool that stands out for its user-friendly visual interface, Octoparse simplifies the data extraction process from websites. It caters to those who prefer a coding-free experience, making it an excellent choice for busy professionals.

BeautifulSoup and Scrapy: For those comfortable with coding, these Python tools are well suited to extracting and parsing HTML content. BeautifulSoup is a parsing library, while Scrapy is a full crawling framework. Both fit more complex data extraction tasks, providing the flexibility and control that experienced users demand.

Browser Extensions: Tools like Web Scraper and Data Miner can be seamlessly integrated into browsers, offering quick and easy scraping solutions without the need for extensive setup. This convenience allows users to focus on their projects rather than technical hurdles.

Evaluate your project requirements and technical skills to select the most appropriate tool. The right choice can significantly enhance your data extraction capabilities.

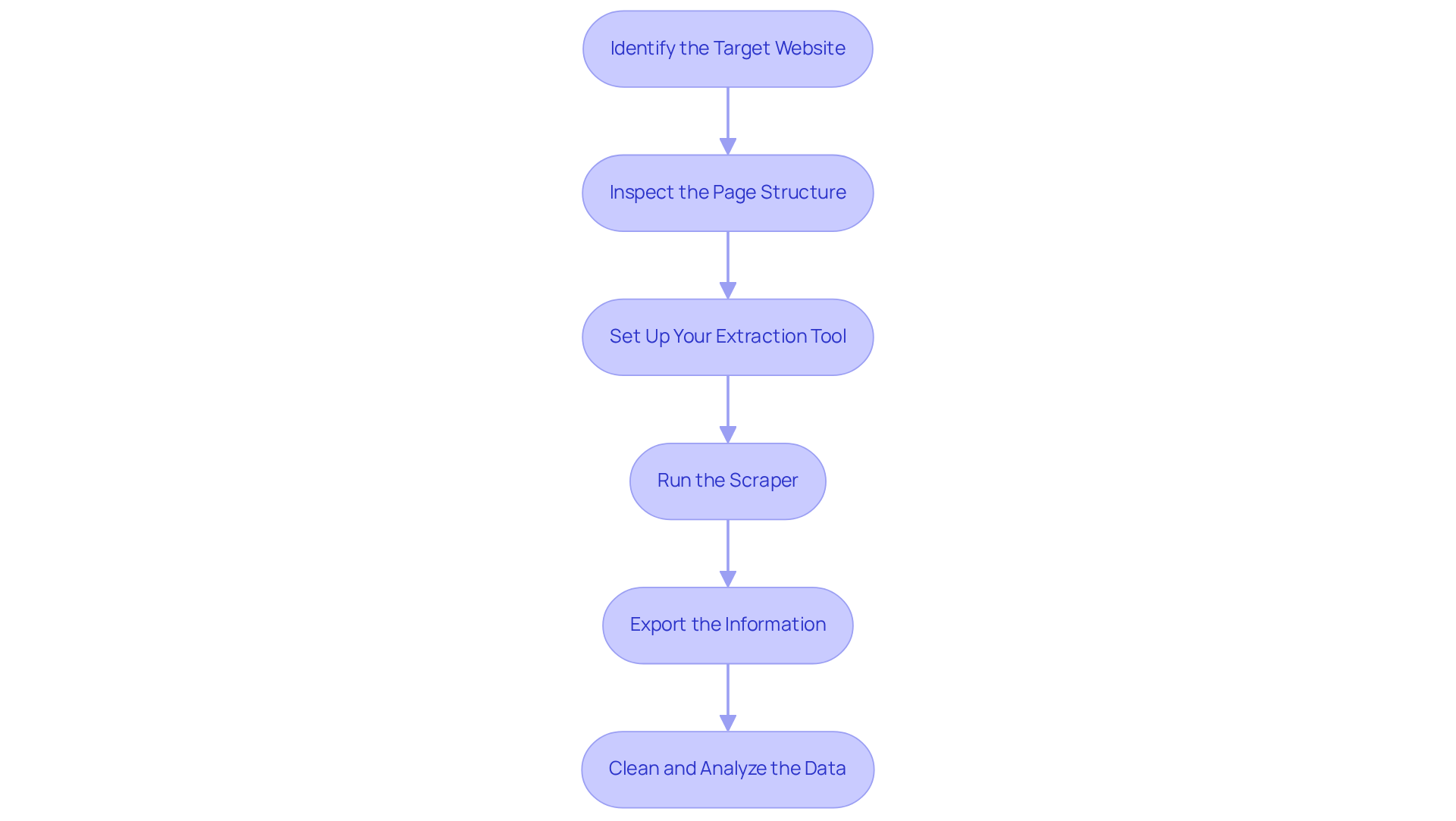

Execute the Data Scraping Process

To effectively scrape data from a website, follow these essential steps:

- Identify the Target Website: Start by selecting a website that holds the information you need. It’s crucial to verify that data collection is permitted by reviewing the terms of service. This step helps you avoid potential legal complications.

- Inspect the Page Structure: Use your browser's developer tools (right-click and select 'Inspect') to analyze the HTML layout of the page. Understanding the structure is vital; it allows you to pinpoint the specific elements containing the information you wish to extract. As Alejandro Loyola emphasizes, inspecting page structure is key for adapting to layout changes, making your extraction process more flexible.

- Set Up Your Extraction Tool: Configure your chosen extraction tool to target the identified elements. For example, in Octoparse, you can click on elements directly to specify what information to scrape, streamlining your setup process.

- Run the Scraper: Initiate the extraction process while closely monitoring the tool to ensure accurate information collection. If you’re using a coding approach, execute your script and stay alert for any errors that may arise during the process.

- Export the Information: After the extraction is complete, export the results to Excel (for example, as an .xlsx or .csv file) to organize the gathered information in your preferred format. This step facilitates further analysis.

- Clean and Analyze the Data: Once exported, clean the dataset to eliminate duplicates or irrelevant entries. Conduct an analysis to extract insights that align with your business objectives.
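For the coding approach, the export and cleaning steps can be sketched as follows. The example writes CSV, which Excel opens directly, rather than a native .xlsx workbook, so it needs nothing beyond the standard library. The sample rows, the field names, and the `clean_rows` and `rows_to_csv` helpers are hypothetical.

```python
import csv
import io

# Hypothetical output of a scraper run, including noise to clean up.
SCRAPED_ROWS = [
    {"name": "Desk Lamp", "price": "19.99"},
    {"name": "Office Chair", "price": "89.00"},
    {"name": "Desk Lamp", "price": "19.99"},  # exact duplicate
    {"name": "", "price": ""},                # empty entry
]


def clean_rows(rows):
    """Drop rows without a name and exact duplicates, preserving order."""
    seen = set()
    cleaned = []
    for row in rows:
        key = (row["name"].strip(), row["price"].strip())
        if not key[0] or key in seen:
            continue
        seen.add(key)
        cleaned.append({"name": key[0], "price": key[1]})
    return cleaned


def rows_to_csv(rows, fieldnames=("name", "price")):
    """Serialize the rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = rows_to_csv(clean_rows(SCRAPED_ROWS))
print(csv_text)

# To save a file for Excel (the "utf-8-sig" encoding helps Excel detect UTF-8):
# with open("products.csv", "w", newline="", encoding="utf-8-sig") as f:
#     f.write(csv_text)
```

Cleaning before export keeps the worksheet free of duplicate and empty rows, so the filters and pivot tables you build in Excel start from consistent data.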

In 2026, the web data extraction market is projected to reach between $2.2 billion and $3.5 billion, underscoring its growing relevance. The landscape is evolving, with a focus on AI-driven solutions that enhance speed and accuracy. As companies increasingly adopt these technologies, understanding the fundamental steps of efficient data extraction remains crucial for success. Moreover, compliance factors are influencing scraping processes, necessitating responsible information collection practices to tackle the challenges posed by advancing anti-bot systems.

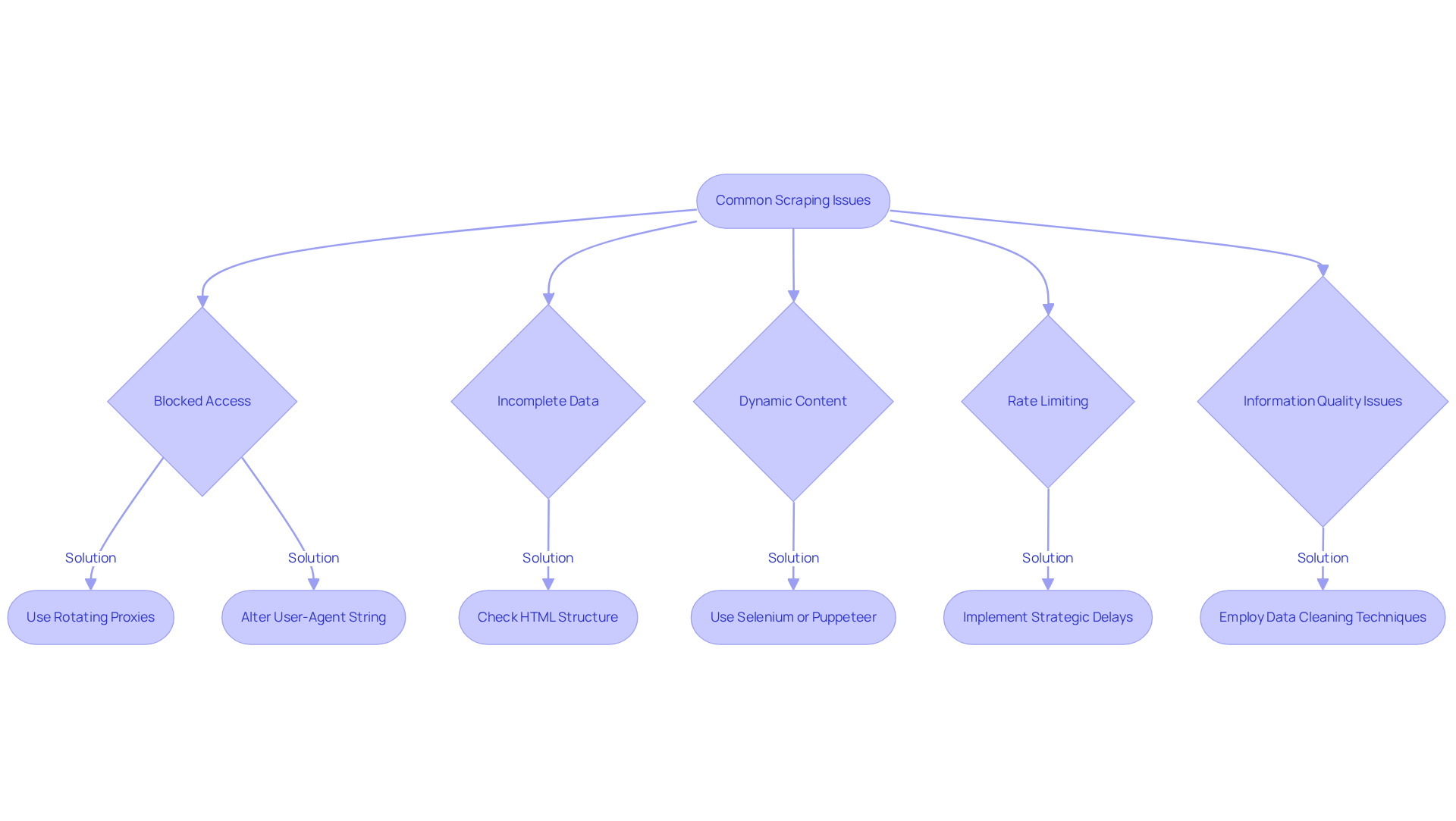

Troubleshoot Common Scraping Issues

Even seasoned scrapers encounter hurdles in their data extraction efforts. Here are some common challenges and effective strategies to troubleshoot them:

- Blocked Access: A 403 Forbidden error often signals that the target website has implemented anti-scraping measures. Such measures are increasingly common: by some estimates, bots will account for nearly half of all internet traffic by 2026, which gives sites strong reasons to filter automated requests. Rotating proxies can improve your chances of success by distributing requests across multiple IP addresses, and setting your user-agent string to that of a standard browser can also help.

- Incomplete Data: If your scraper fails to capture all necessary information, it may be due to changes in the page's HTML structure. Regularly check the layout of the target site and adjust your extraction logic accordingly to ensure comprehensive data collection.

- Dynamic Content: Many modern websites use JavaScript to load content dynamically, which can defeat tools that only download the raw HTML. In these cases, tools like Selenium or Puppeteer, which drive a real browser and therefore execute JavaScript, are needed to obtain the fully rendered HTML and structured data.

- Rate Limiting: If your requests are being throttled, it’s vital to add delays between them. This not only helps prevent your IP from being banned but also aligns with responsible scraping practices.

- Information Quality Issues: After scraping, it’s crucial to ensure that the gathered information is clean and well-structured. Employ data cleaning techniques to eliminate duplicates and irrelevant entries, thereby enhancing the quality of your analysis.
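The rate-limiting advice above can be sketched as a retry loop with growing delays. This is one possible approach under stated assumptions, not a prescribed method: `fetch_with_backoff` and `make_fake_fetch` are hypothetical helpers, `fetch` stands for whatever function performs your HTTP request and returns a `(status_code, body)` pair, and treating 429 and 503 as "slow down" signals is a common convention rather than something every site follows.

```python
import time


def fetch_with_backoff(fetch, url, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), waiting longer after each throttled response.

    Delays double on every retry: base_delay, 2 * base_delay, 4 * base_delay...
    """
    for attempt in range(max_attempts):
        status, body = fetch(url)
        if status not in (429, 503):
            return status, body
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)
    # Still throttled after the final attempt: hand back the last response.
    return status, body


def make_fake_fetch(statuses):
    """Build a stand-in fetch function that replays the given status codes."""
    responses = iter(statuses)

    def fake_fetch(url):
        status = next(responses)
        return status, "ok" if status == 200 else ""

    return fake_fetch


# Demonstration without network access: record the delays instead of sleeping.
delays = []
result = fetch_with_backoff(
    make_fake_fetch([429, 429, 200]),
    "https://example.com/page",
    sleep=delays.append,
)
print(result, delays)
```

Passing `sleep` as a parameter keeps the function easy to test; in production the default `time.sleep` applies the real pauses.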

As Karan Sharma aptly notes, 'Your goal isn’t zero errors: it’s fast containment.' This underscores the importance of proactive measures in web scraping. By remaining adaptable and employing these strategies, you can significantly enhance your scraping operations and effectively navigate common obstacles.

Conclusion

Mastering the art of scraping web data into Excel opens up a world of possibilities for businesses and individuals alike. Understanding the fundamentals of web scraping, selecting the right tools, and executing a well-planned scraping process allows users to efficiently gather and analyze valuable information from various online sources. This guide underscores the importance of ethical considerations and compliance, ensuring that data collection practices remain responsible and legally sound.

Key strategies for successful web scraping have been highlighted throughout the article. From choosing user-friendly tools like Excel Power Query and Octoparse for beginners to leveraging powerful Python tools such as BeautifulSoup and Scrapy for advanced users, the right approach hinges on the individual’s skill level and project requirements. Additionally, troubleshooting common issues, such as blocked access and dynamic content, is crucial for maintaining the integrity of the data extraction process.

As the landscape of web scraping evolves with advancements in technology and increasing regulations, staying informed about best practices and emerging trends is essential. By embracing these techniques and focusing on ethical data collection, users can harness the power of web scraping to drive informed decision-making and gain a competitive edge in their respective fields. Remember, the journey into web scraping is not just about gathering data; it’s about unlocking insights that can lead to transformative outcomes.

Frequently Asked Questions

What is web scraping?

Web scraping is the use of software to extract large amounts of data from websites, typically in HTML format. It allows businesses to gather valuable insights efficiently.

What are the common uses of web scraping?

Common uses of web scraping include market research, competitive analysis, and lead generation.

Why is understanding web scraping important?

Understanding web scraping is crucial for leveraging the vast resources available on the internet and for making informed business decisions.

What ethical considerations should be taken into account when web scraping?

It is essential to check the website's terms of service and robots.txt file to ensure compliance with legal and ethical standards, which helps prevent potential legal issues and promotes responsible information usage.